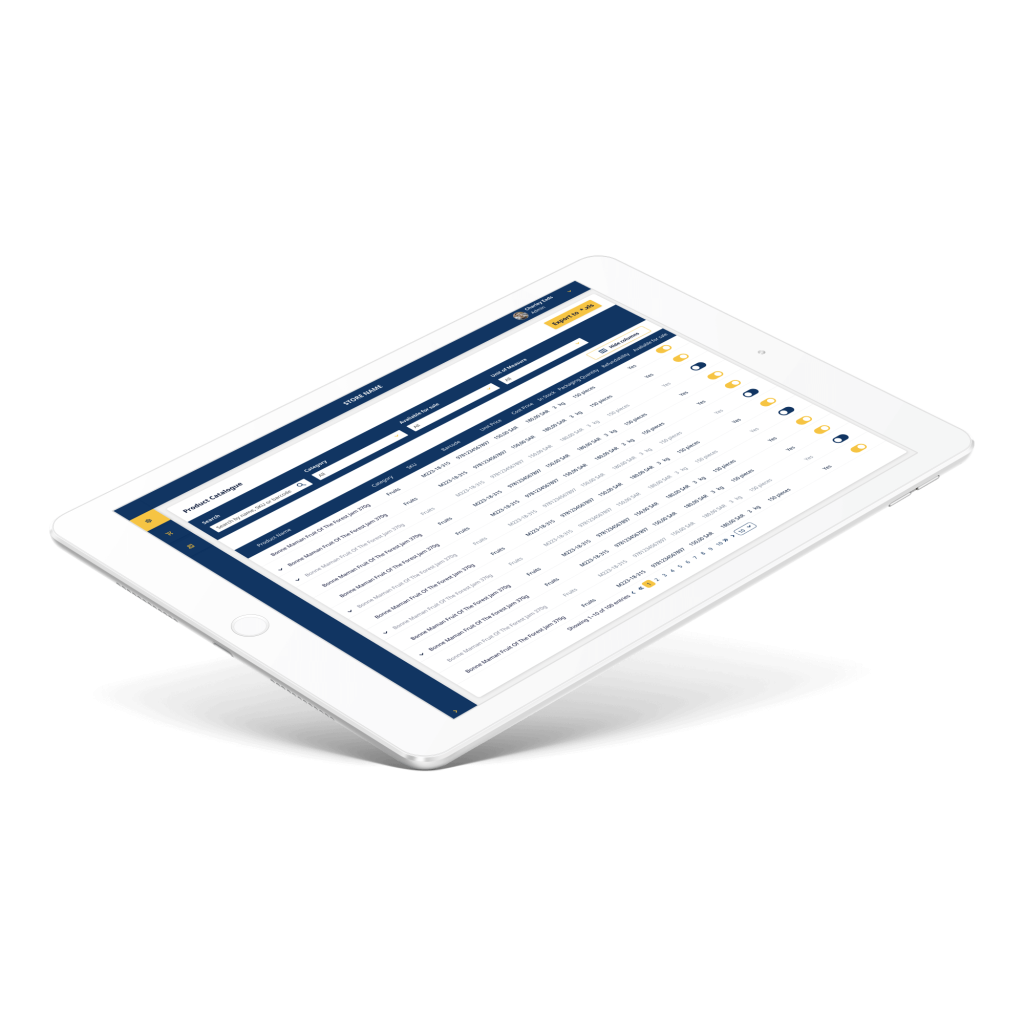

From our years of experience delivering software solutions for logistics, retail, and other industries that require warehouses for their operations, we understand the challenges our customers face daily. For instance, if you run a supermarket, your process consists of at least 4 major modules:

- Online order management & checkout.

- Order packaging.

- Incoming goods processing and warehouse logistics.

- Order delivery.

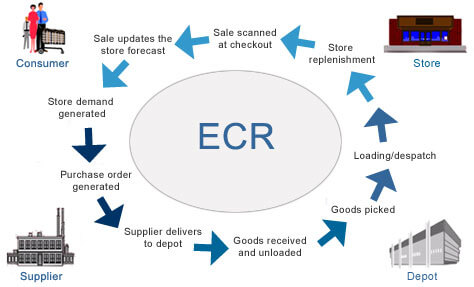

Here's an oversimplified graph of your flow:

Take a minute and you could probably brainstorm several ways Google Glass could be applied in warehousing.

But let's focus on one link in that picture: "Goods received and unloaded". Broken down into steps, it seems to be a rather simple process:

- Truck comes to your warehouse.

- You unload the truck.

- Add all items to your Warehouse Management System.

- Arrange the items by placing them in specific locations inside the warehouse.

But it gets complicated when you need to enter specific data manually: expiry dates, quantities, etc. We thought about how to make a worker more productive during this phase. Wouldn't it be great if you could just:

- Pick up a box with both hands.

- Carry it to the shelf.

- Go back to the truck and repeat.

On the one hand, a worker has a few spare seconds between grabbing a box and shelving it. On the other, Google Glass has a camera and voice recognition.

We combined the two, and here's what we created:

Pretty cool, huh? Here you can see all the basic tasks mentioned above being automated. Google Glass turns out to be remarkably useful for warehouse automation.

In the following sections, we dive into the technical details, so if you're a software engineer, you'll find some interesting pieces of code below.

Working with the Camera in Google Glass

We wanted to let a person grab a package and scan its barcode at the same time, so first we implemented barcode scanning routines for Glass. Conveniently, Glass is an Android device under the hood.

So, as usual, add the permissions to AndroidManifest.xml, initialize your camera, and have fun.

```xml
<uses-feature android:name="android.hardware.camera" />
<uses-feature android:name="android.hardware.camera.autofocus" />
<uses-permission android:name="android.permission.CAMERA" />
<uses-permission android:name="android.permission.RECORD_AUDIO" />
```

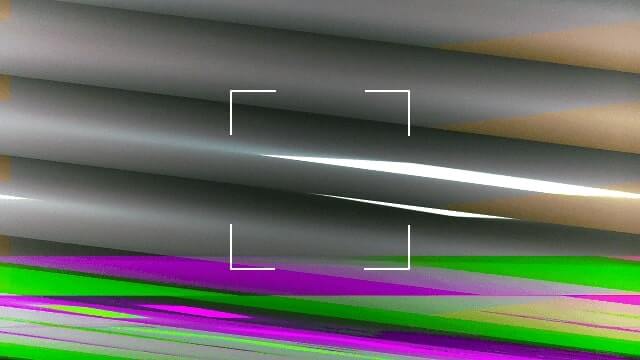

But wait, what the hell is going on? Why does my screen show something like this?

Glass is a beta product, so you need some hacks. When you implement the surfaceChanged(...) method, don't forget to add a parameters.setPreviewFpsRange(30000, 30000) call. The values are frame rates multiplied by 1000, so this pins the preview to exactly 30 fps. In the end, your surfaceChanged(...) should look like this:

```java
public void surfaceChanged(SurfaceHolder holder, int format, int width, int height) {
    // ...
    Camera.Parameters parameters = mCamera.getParameters();
    Camera.Size size = getBestPreviewSize(width, height, parameters);
    parameters.setPreviewSize(size.width, size.height);
    // Glass workaround: pin the preview to 30 fps (values are fps * 1000)
    parameters.setPreviewFpsRange(30000, 30000);
    mCamera.setParameters(parameters);
    mCamera.startPreview();
    // ...
}
```

That's how you make it work.

P.S. Unfortunately, Glass currently supports just one focus mode: "". Hopefully things will get better in the future.
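The surfaceChanged(...) code calls getBestPreviewSize(...), which isn't shown. One common strategy is to pick the largest supported preview size that fits the surface. Here's a hypothetical, off-device sketch of that strategy: to keep it runnable without Android, it takes a list of sizes instead of Camera.Parameters and uses a small stand-in Size class.

```java
import java.util.List;

/** Hypothetical off-device version of the getBestPreviewSize(...) helper. */
final class PreviewSizes {

    /** Stand-in for android.hardware.Camera.Size: dimensions in pixels. */
    static final class Size {
        final int width;
        final int height;

        Size(int width, int height) {
            this.width = width;
            this.height = height;
        }
    }

    /**
     * Picks the largest supported size (by pixel area) that fits inside the
     * width x height surface. If none fits, falls back to the smallest
     * supported size so the caller always gets one (list must be non-empty).
     */
    static Size getBestPreviewSize(int width, int height, List<Size> supported) {
        Size best = null;
        Size smallest = null;
        for (Size candidate : supported) {
            int area = candidate.width * candidate.height;
            if (smallest == null || area < smallest.width * smallest.height) {
                smallest = candidate;
            }
            boolean fits = candidate.width <= width && candidate.height <= height;
            if (fits && (best == null || area > best.width * best.height)) {
                best = candidate;
            }
        }
        return best != null ? best : smallest;
    }

    private PreviewSizes() {}
}
```

On the device you would pass parameters.getSupportedPreviewSizes() and work with Camera.Size directly; the selection logic stays the same.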

Working with Barcodes in Google Glass

Once you see a clear picture inside your prism, proceed with barcode scanning. There are several barcode scanning libraries out there: zxing, zbar, etc. We grabbed a copy of the zbar library and integrated it into our project:

- Download a copy of it.

- Copy the armeabi-v7a folder and the zbar.jar file into the libs folder of your project.

- Use it with the camera:

Initialize the JNI bridge for the zbar library:

```java
static {
    System.loadLibrary("iconv");
}
```

Add this to your activity's onCreate(...):

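```java
setContentView(R.layout.activity_camera);
// ...
scanner = new ImageScanner();
// Scan density: examine every 3rd line in each direction (faster, slightly less thorough)
scanner.setConfig(0, Config.X_DENSITY, 3);
scanner.setConfig(0, Config.Y_DENSITY, 3);
```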

Then create a Camera.PreviewCallback instance like this one. It scans each preview frame and receives the scanning results.

```java
Camera.PreviewCallback previewCallback = new Camera.PreviewCallback() {
    @Override
    public void onPreviewFrame(byte[] data, Camera camera) {
        Camera.Size size = camera.getParameters().getPreviewSize();

        // Wrap the raw NV21 frame and convert it to Y800 (grayscale) for zbar
        Image barcode = new Image(size.width, size.height, "NV21");
        barcode.setData(data);
        barcode = barcode.convert("Y800");

        // > 0: symbols decoded, 0: nothing found, < 0: error
        int result = scanner.scanImage(barcode);
        if (result > 0) {
            SymbolSet syms = scanner.getResults();
            for (Symbol sym : syms) {
                doSmthWithScannedSymbol(sym);
            }
        }
    }
};
// Register it with mCamera.setPreviewCallback(previewCallback)
```

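Keep in mind that the Android camera returns preview frames in the NV21 format by default, while zbar's ImageScanner supports only the Y800 (8-bit grayscale) format; that's what the barcode.convert("Y800") call is for. Because an NV21 frame starts with a full-resolution Y (luminance) plane, you can also skip the conversion by creating the Image with the "Y800" format directly, and the scanner will still work.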
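Why can NV21 data be treated as Y800 at all? An NV21 frame stores a full-resolution luminance (Y) plane of width × height bytes first, followed by interleaved V/U chroma samples at quarter resolution; Y800 is just that luminance plane on its own. Here's a pure-Java sketch of the relationship. The Nv21 class and toY800 method are hypothetical names for illustration, not zbar's implementation, and the size check assumes even width and height, as camera preview sizes are.

```java
import java.util.Arrays;

/** Hypothetical illustration: Y800 (grayscale) view of an NV21 frame. */
final class Nv21 {

    /**
     * NV21 layout: width*height Y bytes, then width*height/2 interleaved V/U bytes.
     * Returns a copy of just the Y plane, which is exactly a Y800 image.
     */
    static byte[] toY800(byte[] nv21, int width, int height) {
        int lumaSize = width * height;
        int expected = lumaSize + lumaSize / 2; // assumes even width and height
        if (nv21.length < expected) {
            throw new IllegalArgumentException(
                    "NV21 frame too short: " + nv21.length + " < " + expected);
        }
        return Arrays.copyOf(nv21, lumaSize); // drop the chroma bytes
    }

    private Nv21() {}
}
```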

That's it. Now you can scan barcodes with your Google Glass 🙂
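One practical wrinkle: onPreviewFrame(...) fires for every preview frame, so a barcode that stays in view will typically be decoded over and over. Below is a hypothetical sketch that doSmthWithScannedSymbol(...) could consult before acting on a symbol; the ScanDebouncer class and its quiet-period rule are our assumptions, not part of zbar or the original project.

```java
import java.util.HashMap;
import java.util.Map;

/**
 * Hypothetical debouncer: accepts a barcode value only if it has not been
 * seen during the last quietPeriodMillis.
 */
final class ScanDebouncer {
    private final long quietPeriodMillis;
    private final Map<String, Long> lastSeen = new HashMap<>();

    ScanDebouncer(long quietPeriodMillis) {
        this.quietPeriodMillis = quietPeriodMillis;
    }

    /**
     * Returns true if this scan should be handled, false if it is a repeat.
     * Every sighting refreshes the timestamp, so a barcode that stays in view
     * is reported once, and again only after it has been absent for the quiet period.
     */
    synchronized boolean accept(String value, long nowMillis) {
        Long previous = lastSeen.put(value, nowMillis);
        return previous == null || nowMillis - previous >= quietPeriodMillis;
    }
}
```

On the device you might call debouncer.accept(sym.getData(), SystemClock.elapsedRealtime()) inside the symbol loop and skip the symbol when it returns false.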

Handling Voice Input in Google Glass

Along with the camera, Google Glass has microphones that let you control it with your voice. Voice control feels natural here, although people around you might find it disturbing, especially when Glass fails to recognize "ok glass, google what does the fox say" five times in a row.

As you may remember, we want to avoid manual entry of package-specific data. Some groceries have an expiry date, so let's implement voice input for it. This way, a worker picks up a package with both hands, scans the barcode, says the expiry date while carrying the package, and moves on to the next one.

From a technical standpoint, we need to solve two problems:

- Perform speech-to-text recognition.

- Extract the date from the resulting free-form text.

Task #1 can be solved with Google's speech recognition API in Android. To use it from Glass, fire the standard Android speech recognition Intent:

```java
// EXPIARY_DATE_REQUEST: an int request code constant defined in your Activity
Intent intent = new Intent(RecognizerIntent.ACTION_RECOGNIZE_SPEECH);
intent.putExtra(RecognizerIntent.EXTRA_PROMPT, "Say expiry date:");
startActivityForResult(intent, EXPIARY_DATE_REQUEST);
```

And override onActivityResult(...) in your Activity, of course:

```java
@Override
protected void onActivityResult(int requestCode, int resultCode, Intent data) {
    if (resultCode == Activity.RESULT_OK && requestCode == EXPIARY_DATE_REQUEST) {
        doSmthWithVoiceResult(data);
    } else {
        super.onActivityResult(requestCode, resultCode, data);
    }
}

public void doSmthWithVoiceResult(Intent intent) {
    // Recognition hypotheses returned by the speech recognizer
    List<String> results = intent.getStringArrayListExtra(RecognizerIntent.EXTRA_RESULTS);
    Log.d(TAG, "" + results);
    if (results != null && !results.isEmpty()) {
        String spokenText = results.get(0);
        doSmthWithDateString(spokenText);
    }
}
```

Once we have free-form text from the speech recognition API, we need to solve task #2: extracting the date from it. We need to work out which date is hidden behind phrases like:

- in 2 days

- next Thursday

- 25th of May

To do this, you can either write your own lexical parser or use a third-party library. We found an awesome library called natty that does exactly this: it is a natural language date parser written in Java. You can even try it online here.

Here's how to use it in your project. Add natty.jar to your project; if you use Maven, add this dependency:
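To get a feel for the write-your-own route, here's a toy sketch that handles only the three example phrases above. ToyDateParser is a hypothetical class, and it assumes java.time (Java 8+; on Android that means API level 26+ or core library desugaring). It treats "next Thursday" as the first Thursday after today and moves day-of-month dates that have already passed into next year, since expiry dates lie in the future. Even this tiny subset needs several rules, which is a good argument for a real parser.

```java
import java.time.DateTimeException;
import java.time.DayOfWeek;
import java.time.LocalDate;
import java.time.Month;
import java.time.temporal.TemporalAdjusters;
import java.util.Locale;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

/** Hypothetical toy parser for three spoken date patterns; not a natty replacement. */
final class ToyDateParser {
    // "in 2 days", "in 3 weeks"
    private static final Pattern IN_N_UNITS =
            Pattern.compile("in (\\d{1,3}) (days?|weeks?)");
    // "next thursday"
    private static final Pattern NEXT_WEEKDAY =
            Pattern.compile("next (monday|tuesday|wednesday|thursday|friday|saturday|sunday)");
    // "25th of may", "1 of june"
    private static final Pattern DAY_OF_MONTH =
            Pattern.compile("(\\d{1,2})(?:st|nd|rd|th)? of ([a-z]+)");

    /** Returns the date a phrase refers to, relative to today, or null if unrecognized. */
    static LocalDate parse(String text, LocalDate today) {
        String s = text.trim().toLowerCase(Locale.ROOT);

        Matcher m = IN_N_UNITS.matcher(s);
        if (m.matches()) {
            int n = Integer.parseInt(m.group(1));
            return m.group(2).startsWith("week") ? today.plusWeeks(n) : today.plusDays(n);
        }

        m = NEXT_WEEKDAY.matcher(s);
        if (m.matches()) {
            // First such weekday strictly after today
            DayOfWeek day = DayOfWeek.valueOf(m.group(1).toUpperCase(Locale.ROOT));
            return today.with(TemporalAdjusters.next(day));
        }

        m = DAY_OF_MONTH.matcher(s);
        if (m.matches()) {
            try {
                Month month = Month.valueOf(m.group(2).toUpperCase(Locale.ROOT));
                LocalDate date = LocalDate.of(today.getYear(), month, Integer.parseInt(m.group(1)));
                // A date that already passed this year must mean next year
                return date.isBefore(today) ? date.plusYears(1) : date;
            } catch (IllegalArgumentException | DateTimeException e) {
                return null; // unknown month name or impossible day, e.g. "31st of june"
            }
        }
        return null;
    }

    private ToyDateParser() {}
}
```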

```xml
<dependency>
    <groupId>com.joestelmach</groupId>
    <artifactId>natty</artifactId>
    <version>0.8</version>
</dependency>
```

If you copy JARs into your libs folder instead, you'll need natty together with its dependencies. Download all of these:

- stringtemplate-3.2.jar

- antlr-2.7.7.jar

- antlr-runtime-3.2.jar

- natty-0.8.jar

Then use the Parser class in your code:

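```java
import com.joestelmach.natty.DateGroup;
import com.joestelmach.natty.Parser;
import java.util.Date;
import java.util.List;

// Example: doSmthWithDateString("in 2 days");
public static Date doSmthWithDateString(String text) {
    Parser parser = new Parser();
    List<DateGroup> groups = parser.parse(text);
    for (DateGroup group : groups) {
        // Typed list, so dates.get(0) is a Date rather than a raw Object
        List<Date> dates = group.getDates();
        Log.d(TAG, "PARSED: " + dates);
        if (!dates.isEmpty()) {
            return dates.get(0);
        }
    }
    return null; // no date found in the text
}
```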

That's it. natty does a pretty good job of turning spoken phrases into Date instances.
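doSmthWithVoiceResult(...) only looks at the first recognition result, but the recognizer may return several alternative transcriptions. One possible refinement is to try each in turn until the date parser understands one. The sketch below is hypothetical (ExpiryDateFromSpeech and firstParsable are our names); it takes the parser as a function, so it works with the natty-based doSmthWithDateString(...) or any other parser.

```java
import java.util.List;
import java.util.function.Function;

/** Hypothetical helper: first recognition hypothesis the date parser understands. */
final class ExpiryDateFromSpeech {

    /**
     * Tries each hypothesis in order (the recognizer's preferred one first)
     * and returns the first non-null parse result, or null if none parses.
     */
    static <T> T firstParsable(List<String> hypotheses, Function<String, T> parser) {
        if (hypotheses == null) {
            return null;
        }
        for (String hypothesis : hypotheses) {
            T parsed = parser.apply(hypothesis);
            if (parsed != null) {
                return parsed;
            }
        }
        return null;
    }

    private ExpiryDateFromSpeech() {}
}
```

In the Activity this could look like Date expiry = ExpiryDateFromSpeech.firstParsable(results, text -> doSmthWithDateString(text)); so a misheard first hypothesis such as "in too days" doesn't lose the expiry date when a later one parses.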

Instead of a Summary

Glass is an awesome device, though it still has some issues. Even though it's still in beta, developing apps for it is exciting! Don't hesitate to contact us if you need any advice.

P.S. You can find the full source code for this example here: https://github.com/eleks/glass-warehouse-automation
