“Happy Botting,” said Microsoft, and people all over the world started creating their own intelligent helpers to make life easier and more fun. Since the first demo bots looked quite promising, we decided to experiment with the technology ourselves.

Enough browsing, let’s talk!

When it comes to the user experience of websites, the first thing that comes to mind is browsing, followed by clicking and scrolling. But no matter how smart and simple the user interface is, wouldn’t it be nicer if you could write a message to the website, or even talk to it, and receive an answer containing the information you need? Our bot assistant can make this user experience real.

The new framework powers automated bots that can execute a wide range of commands. People can now interact with bots through text messages or voice commands. The great advantage of bots is that they connect easily to other systems and services, so users get things done faster and with less effort. Bots can be integrated into various areas, including workforce management and user support. With the help of smart assistants, people can apply for leave, add events to a calendar, assign tasks to employees in task-tracking systems, and so on.

When working with system interfaces, bots can also help approve requests, report an equipment failure or search for information on websites. In other words, a personal digital assistant can now do much of your repetitive daily work for you. Bots save time, and there is no need to download additional apps or click through web pages.

We decided to implement the information search scenario with our own bot connected to our corporate website, eleks.com. Our aim was to let users simply send a message or ask a question, and have the bot understand it and reply with the information the user was looking for.

So how can a bot assistant actually understand us?

Microsoft’s Bot Framework provides a comprehensive set of tools for bot development. You can create bots that understand what you say or write and respond to you. Moreover, they can keep track of a conversation the way humans do.

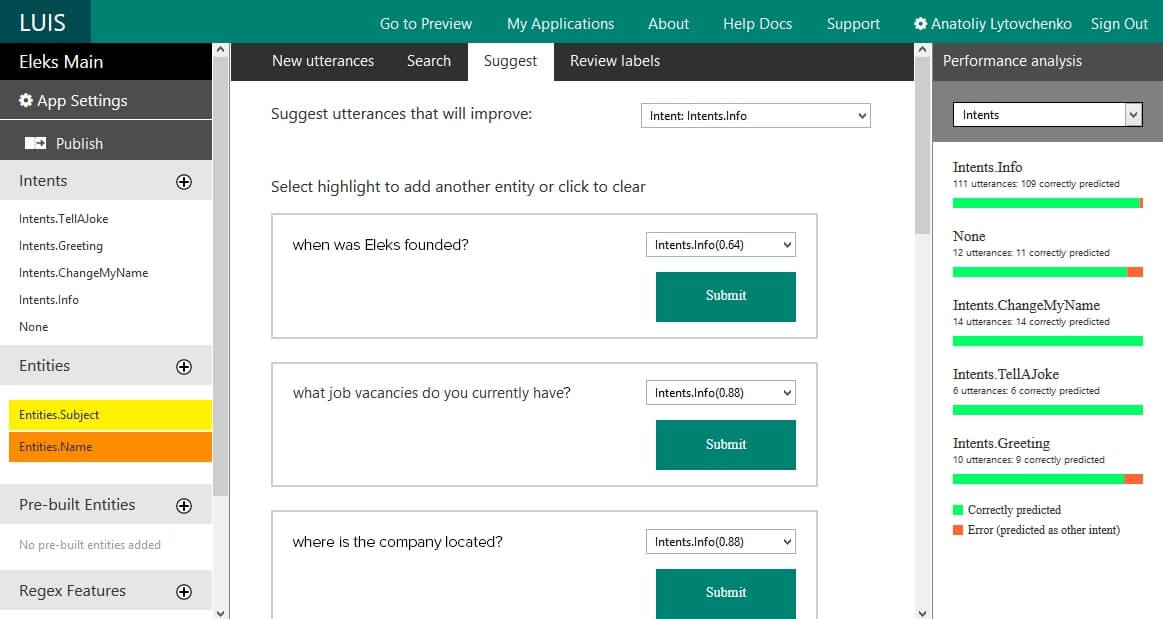

All these features are possible thanks to Bot Framework’s native integration with LUIS, the Language Understanding Intelligent Service (https://www.luis.ai/), which lets you build language-understanding models. LUIS makes digital assistants smarter and lets them act on commands phrased in natural language, whether typed or spoken. This means that users can communicate with bots in a more natural way, in their own words, including mistakes and jargon, and still be understood and receive the information they need.

Another benefit of the LUIS interface is that it is convenient for both developers and language specialists. It took us about an hour to understand the key features and start using them. More complex changes can be made directly in JSON, the format to which a LUIS model can be exported. The exported model, saved as a text file, can then be processed further or used for automated model retraining.
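To illustrate what processing an exported model might look like, here is a minimal Python sketch (our own application was written in .NET; Python is used here only for brevity). The sample data is made up, and the field names `intents`, `entities`, `utterances`, `text` and `intent` are assumptions about the export layout that you should check against your own file.

```python
import json
from collections import Counter

# Hypothetical, heavily trimmed stand-in for an exported LUIS model file.
# Field names are assumptions; verify them against a real export.
EXPORTED_MODEL = """
{
  "name": "WebsiteAssistant",
  "intents": [{"name": "FindContacts"}, {"name": "FindServices"}, {"name": "None"}],
  "entities": [{"name": "Service"}],
  "utterances": [
    {"text": "where are your offices located", "intent": "FindContacts", "entities": []},
    {"text": "how can i contact you", "intent": "FindContacts", "entities": []},
    {"text": "what services do you offer", "intent": "FindServices", "entities": []}
  ]
}
"""


def utterances_per_intent(model: dict) -> dict:
    """Count labelled example utterances for every declared intent."""
    counts = Counter(u["intent"] for u in model.get("utterances", []))
    return {i["name"]: counts.get(i["name"], 0) for i in model.get("intents", [])}


def add_utterance(model: dict, text: str, intent: str) -> None:
    """Append a labelled example, e.g. before re-importing and retraining."""
    known = {i["name"] for i in model.get("intents", [])}
    if intent not in known:
        raise ValueError(f"unknown intent: {intent}")
    model.setdefault("utterances", []).append(
        {"text": text, "intent": intent, "entities": []}
    )


model = json.loads(EXPORTED_MODEL)
add_utterance(model, "which technologies do you work with", "FindServices")
print(utterances_per_intent(model))
```

A report like this makes it easy to spot intents with too few examples before the next training session.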

The development process

We started the application development process with data preparation. As we wanted our smart assistant to provide website users with relevant and accurate information, we had to ensure that the bot had a reliable source for that information.

ELEKS’ corporate website eleks.com has all its information organized into separate blocks (`<div>` HTML tags with structured data inside), from which it can be extracted automatically and then processed further. For that purpose, we created a simple crawler that selects the blocks of needed information and organizes them internally into a simple dictionary.

Fig. 1. Data structure in the dictionary
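A crawler of this kind could be sketched as follows. This is not our production code: the CSS class `info-block`, the sample markup and the choice of heading text as dictionary key are all hypothetical, and the sketch parses an inline string rather than fetching the live site.

```python
from html.parser import HTMLParser

HEADINGS = ("h1", "h2", "h3")


class BlockCollector(HTMLParser):
    """Collects the text of every <div> carrying a given CSS class."""

    def __init__(self, block_class: str):
        super().__init__()
        self.block_class = block_class
        self.blocks = {}          # heading text (lowercased) -> body text
        self._depth = 0           # <div> nesting depth inside the current block
        self._in_heading = False
        self._heading = []
        self._body = []

    def handle_starttag(self, tag, attrs):
        if tag == "div":
            classes = (dict(attrs).get("class") or "").split()
            if self._depth > 0:
                self._depth += 1                  # nested <div> inside a block
            elif self.block_class in classes:
                self._depth = 1                   # a new block starts here
                self._heading, self._body = [], []
        elif self._depth > 0 and tag in HEADINGS:
            self._in_heading = True

    def handle_endtag(self, tag):
        if self._depth == 0:
            return
        if tag in HEADINGS:
            self._in_heading = False
        elif tag == "div":
            self._depth -= 1
            if self._depth == 0:                  # block closed: store it
                key = " ".join(self._heading).lower()
                self.blocks[key] = " ".join(self._body)

    def handle_data(self, data):
        text = data.strip()
        if self._depth > 0 and text:
            (self._heading if self._in_heading else self._body).append(text)


def crawl(html: str, block_class: str = "info-block") -> dict:
    collector = BlockCollector(block_class)
    collector.feed(html)
    collector.close()
    return collector.blocks


# Made-up markup standing in for a page of the website.
SAMPLE_PAGE = """
<div class="nav">Home | About</div>
<div class="info-block"><h2>Contacts</h2><p>Write to us via the contact form.</p></div>
<div class="info-block"><h2>Services</h2><div><p>Software development</p><p>Quality assurance</p></div></div>
"""

knowledge = crawl(SAMPLE_PAGE)
print(knowledge)
```

Keying the dictionary by a normalized heading keeps lookups trivial once the bot knows which topic the user is asking about.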

Enabling language recognition

We used a LUIS model to enable our bot to understand the user’s language. We created a LUIS application and defined its entities (also called parameters), the pieces of text the bot should pick out of a message. Since we wanted our bot to return the most relevant information, we ran several training sessions to teach the LUIS model to understand the text.

Unfortunately, we ran into an issue: the bot struggled to understand context, so it could not respond with the level of accuracy we required. To make LUIS work for us, we had to craft a set of carefully worded commands that would be easy to follow. These commands were built from intents and entities.
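In short, an intent names what the user wants, and entities carry the parameters of the request. The Python sketch below shows how a recognition result might be reduced to that pair. The response shape (`topScoringIntent`, `entities`, `type`, `score`) is an assumption about what the service returns, and the sample values and the 0.5 threshold are invented for illustration.

```python
import json

# Hypothetical recognition result; field names are assumptions, values are made up.
SAMPLE_RESPONSE = """
{
  "query": "what services do you offer in testing",
  "topScoringIntent": {"intent": "FindServices", "score": 0.93},
  "entities": [{"entity": "testing", "type": "Service", "score": 0.88}]
}
"""


def interpret(raw: str, min_score: float = 0.5):
    """Return (intent, {entity_type: value}); weak matches fall back to 'None'."""
    result = json.loads(raw)
    top = result.get("topScoringIntent", {})
    confident = top.get("score", 0.0) >= min_score
    intent = top.get("intent", "None") if confident else "None"
    entities = {e["type"]: e["entity"] for e in result.get("entities", [])}
    return intent, entities


intent, entities = interpret(SAMPLE_RESPONSE)
print(intent, entities)
```

Treating low-confidence results as the `None` intent lets the bot ask the user to rephrase instead of answering the wrong question.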

And now the conversation starts!

As a final step, we created a .NET application that connects the public API used to communicate with the bot to our LUIS model for text recognition. Technically, the main application is a .NET Web API app with a single controller, which keeps it simple to use.

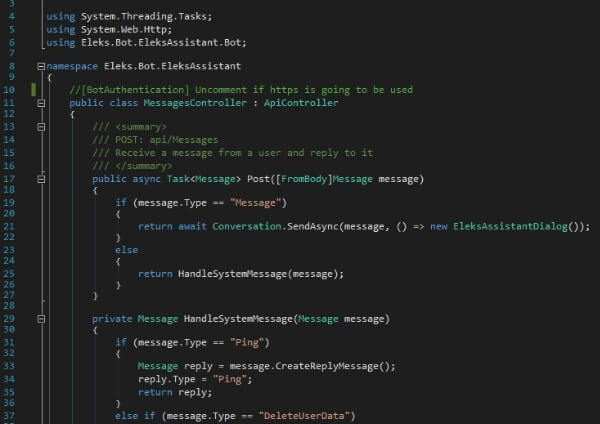

Fig. 3. Example of the code that handles user’s messages and system messages

The code defines a single method that handles both the user’s messages and system messages. We decided to use a “conversation” as the method of communication between the bot and a user, with the bot waiting for a request and providing the requested information as an answer. The conversation method also keeps track of the previous requests made in the course of the conversation, so that the bot can react with a higher level of accuracy. Many other types of communication methods are available when developing your bot.
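The idea of one entry point plus per-conversation memory can be sketched in a few lines of Python. This is an illustration only, not the .NET controller shown in the figure: the message fields (`conversation_id`, `type`, `text`) and the system message types are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Conversation:
    """Per-conversation state: remembers the user's earlier requests."""
    history: list = field(default_factory=list)


conversations = {}  # conversation id -> Conversation


def handle(message: dict) -> str:
    """Single entry point for user messages and system messages alike."""
    conv_id = message["conversation_id"]
    conv = conversations.setdefault(conv_id, Conversation())
    kind = message.get("type", "message")

    # System messages (hypothetical type names).
    if kind == "ping":
        return "pong"
    if kind == "conversation_started":
        return "Hello! Ask me anything about the company."
    if kind == "conversation_ended":
        conversations.pop(conv_id, None)
        return ""

    # Ordinary user message: use the history to resolve a follow-up.
    text = message["text"].strip()
    if text.lower() == "more" and conv.history:
        return f"More about: {conv.history[-1]}"
    conv.history.append(text)
    return f"Searching for: {text}"


print(handle({"conversation_id": "c1", "type": "conversation_started"}))
print(handle({"conversation_id": "c1", "text": "services"}))
print(handle({"conversation_id": "c1", "text": "more"}))
```

Because the history lives with the conversation, a short follow-up such as "more" can be resolved against the previous request.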

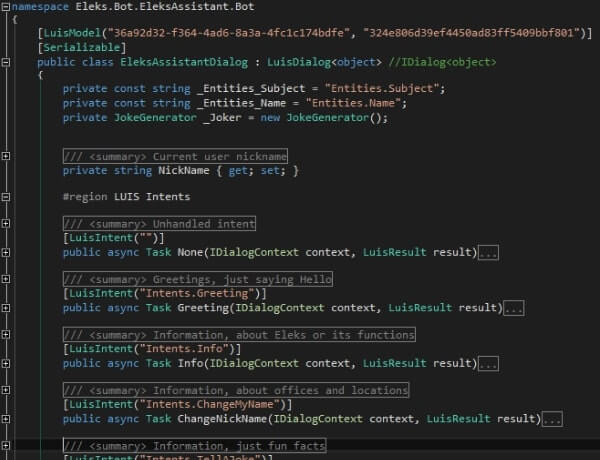

Fig. 4. The class that supports the logic of the conversation process

As you may have noticed, the class contains a reference to the LUIS model as well as the intents that we mentioned earlier. The rest is the logic within the dialog, which is limited only by your imagination. To test your bot, you can use the Bot Framework Emulator.
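The pattern of a dialog class that maps each intent to a handler method can be approximated in Python as shown below. Everything here is hypothetical: the `intent` decorator, the `WebsiteDialog` class, the intent names and the keyword-based `fake_recognize` function, which stands in for the real LUIS call so that the sketch runs offline.

```python
def intent(name):
    """Mark a method as the handler for a named intent."""
    def mark(func):
        func.intent_name = name
        return func
    return mark


class WebsiteDialog:
    def __init__(self, recognize, knowledge):
        self.recognize = recognize    # callable: text -> (intent name, entities)
        self.knowledge = knowledge    # dictionary built from the site content
        self.handlers = {}
        # Register every method that was marked with @intent.
        for attr in dir(self):
            member = getattr(self, attr)
            name = getattr(member, "intent_name", None)
            if callable(member) and name is not None:
                self.handlers[name] = member

    def reply(self, text: str) -> str:
        intent_name, entities = self.recognize(text)
        handler = self.handlers.get(intent_name, self.fallback)
        return handler(entities)

    @intent("FindContacts")
    def contacts(self, entities):
        return self.knowledge.get("contacts", "No contact details found.")

    @intent("FindServices")
    def services(self, entities):
        return self.knowledge.get("services", "No services found.")

    @intent("None")
    def fallback(self, entities):
        return "Sorry, I did not understand that."


def fake_recognize(text):
    """Keyword stand-in for the language-understanding call."""
    lowered = text.lower()
    if "contact" in lowered:
        return "FindContacts", {}
    if "service" in lowered:
        return "FindServices", {}
    return "None", {}


dialog = WebsiteDialog(
    fake_recognize,
    {"contacts": "Use the contact form.", "services": "Software development"},
)
print(dialog.reply("How can I contact you?"))
```

Adding support for a new question type then means adding one intent to the model and one decorated method to the class.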

So, is botting actually worth it?

What we found in the course of our experiment is that automated bots can be quite good at handling Q&A sessions with a website user. Instead of searching through a long list of questions and answers, the user can simply type a short message or ask a question aloud. The task we set for our smart assistant at the beginning of the experiment was to understand written and spoken language, follow the logic of a dialogue with a user, and provide answers about ELEKS’ location, services, contacts and any other company information that a potential user may ask about.

We are quite satisfied with the results. Our bot handles search tasks quickly and produces relevant answers with a high level of accuracy, thanks to the language recognition enabled by LUIS. There is no need to click through the website pages, as the bot is driven by a voice command or a text message, which can be very convenient for a busy user. To make it easier for users to interact with our bot, we created a simple mobile application with Xamarin.

Since bots let us perform time-consuming tasks faster and more easily, we believe that in the near future smart assistants are likely to become an integral part of the user experience in various areas. Our next steps will focus on creating a bot to assist with routine activities such as booking meeting rooms, reporting vacations and retrieving statistical data. We will also continue updating our website assistant with new functionality. We aim to give it some human-like features and skills to ensure an even more natural and seamless user experience. The most interesting part is yet to come.

If you want to improve the online user experience for your customers, we are here to help. Get in touch.
