For example, when a company publishes an API over the Internet to interact with partners or directly with clients, security services and auditing become extremely important because any unauthorised activity can result in a security breach. Such a breach carries the danger of financial loss and can damage a brand’s reputation.

In this blog, we will share our vision of a scalable architecture model that lets you automate and orchestrate an organisation of any size, from an SMB to a large enterprise. Our approach allows you to integrate your custom internal tools with third-party components so that they work as one seamless and secure ecosystem.

We will touch upon the key challenges of enterprise application integration, provide a comparative review of the open-source tools for business process automation available on the market now, and share some actionable insights on how to make it all work.

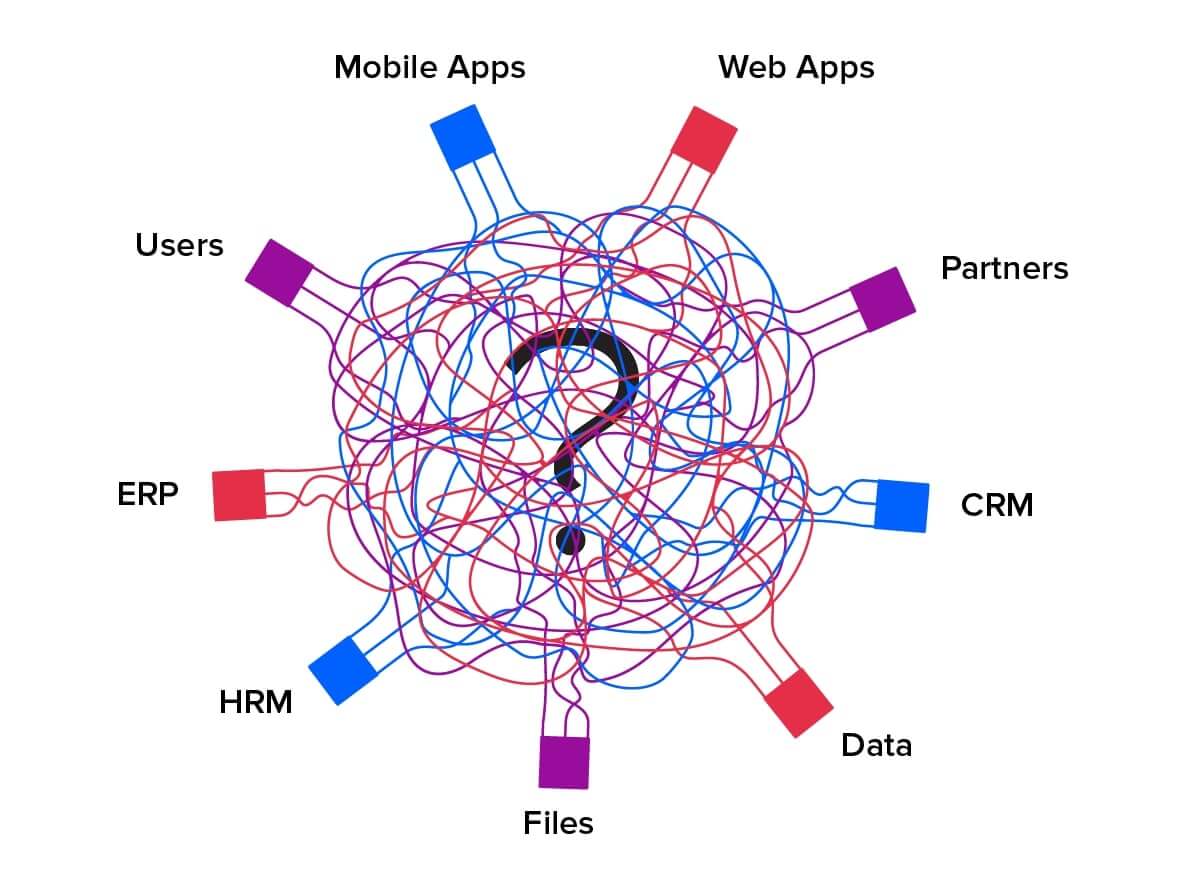

Enterprise application integration hurdles every organisation knows

Implementing efficient and secure system integration and business process automation for an enterprise that has a large number of IT systems is a challenging endeavour.

Usually, the first problem is that IT systems use different protocols for communication. The second is security: non-centralised user and access management leads to complex security management and a high possibility of human error.

The latter is the most pressing one when it comes to internal buy-in, and it can result in limited budgeting: enterprise application integration projects are often considered very expensive and are frequently rejected or only partially implemented.

Here is an example of a business process that is rarely automated: the formal process of employee resignation. When an employee leaves a company there is always some formal procedure which one needs to follow: terminating accounts in various corporate systems, returning office equipment (corporate laptop, PC, etc.), as well as collecting signatures on relevant paperwork. This process is usually completely human-driven and requires multiple departments to step in at some point, such as HR, finance and IT.

There is always a risk of duplicated efforts or even a mistake that could cause extra time and resource spending, as well as some unnecessary stress. When it comes to taking a new team member on board, things look pretty much the same.

So why not automate this and many other similar formal processes? Well, here’s the challenge: a large enterprise usually has numerous internal legacy systems and tools which, chances are, are fairly cumbersome and run on outdated technologies. Internal data often lives in Excel files with no shared access and no unified update process.

Does this sound familiar? Well, the solution to this problem comes in the form of an ERP system; however, such a solution is usually associated with a substantial cost. So how do you ensure you get the most out of your investment?

Today’s open-source market offers several mature, enterprise-level systems that can solve various business process automation problems. We will show you how to pick the right one and how to integrate it properly.

SOA and microservices in software architecture design

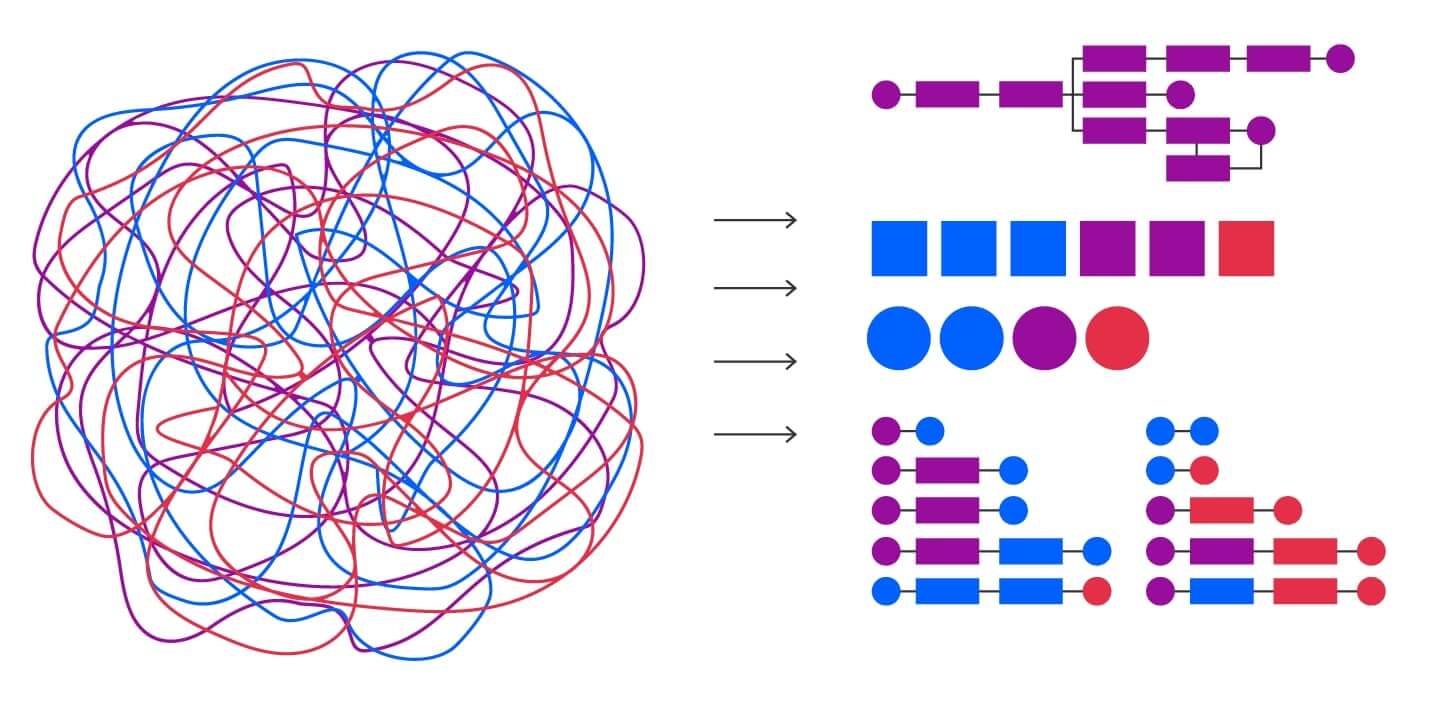

The common solution for business process automation is service-oriented architecture (SOA). SOA is an approach in software architecture design where system components provide services to other components within the network using a communication protocol. An SOA is independent of vendors, products and technologies. Each service is an individual functional unit that can be accessed remotely and acted upon and updated independently.

The SOA approach can be extended with microservices to enable independent horizontal scaling of services, independent development and deployment, and the ability to substitute one service for another with ease. Microservices also keep each codebase small, which reduces the number of conflicts and makes it easier for new developers to get involved. And certainly, the growth of cloud solutions requires maintaining agility in architecture design.

Why use microservice architecture?

The main point of criticism for service/microservice architecture concerns the latency of network and message format transformation.

When we have a lot of IT systems and all the data and business functions are spread across the network, we have to manage data transfer through the network and deal with data formats either way. In this case, the optimal solution is still to create a service/microservice architecture.

So, isn’t it better (faster, safer) to call functions/systems directly using their native protocols, without any middleware services? In short, the answer is NO. Just imagine that there is a function, such as "get bank account info", in your core-banking system and it has five clients: a mobile app, Web (Internet) banking, a CRM system, a chat/voice bot and a front-end app for bank employees.

If you ever want to add just one field to this function, you have to rewrite and recheck the code for five different systems. That's where microservices come in: they remove this dependency and save you money on enterprise application integration.
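The idea can be illustrated with a minimal sketch. It assumes a hypothetical native record format (`ACC_NO`, `BAL_MINOR`, `CCY`, `STATUS_CD`) and a hypothetical `core_banking_get_account` stub; none of these names come from a real core-banking product. The middleware service is the only place that knows the native format, so adding a field touches one function instead of five clients.

```python
from dataclasses import asdict, dataclass


def core_banking_get_account(account_id: str) -> dict:
    """Hypothetical stand-in for the core-banking system's native call."""
    return {"ACC_NO": account_id, "BAL_MINOR": 125050, "CCY": "EUR", "STATUS_CD": "A"}


@dataclass(frozen=True)
class AccountInfo:
    """Stable contract consumed by all five clients."""
    account_id: str
    balance: float
    currency: str
    # Field added later; the default keeps existing callers working.
    status: str = "unknown"


def get_bank_account_info(account_id: str) -> AccountInfo:
    """Middleware service: maps the native record onto the shared contract."""
    raw = core_banking_get_account(account_id)
    return AccountInfo(
        account_id=raw["ACC_NO"],
        balance=raw["BAL_MINOR"] / 100,  # minor units to major units
        currency=raw["CCY"],
        status={"A": "active", "C": "closed"}.get(raw["STATUS_CD"], "unknown"),
    )


info = get_bank_account_info("UA-001")
print(asdict(info))
```

Clients depend only on `AccountInfo`, so a change in the core-banking record format is absorbed inside `get_bank_account_info`.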

The implementation process in detail

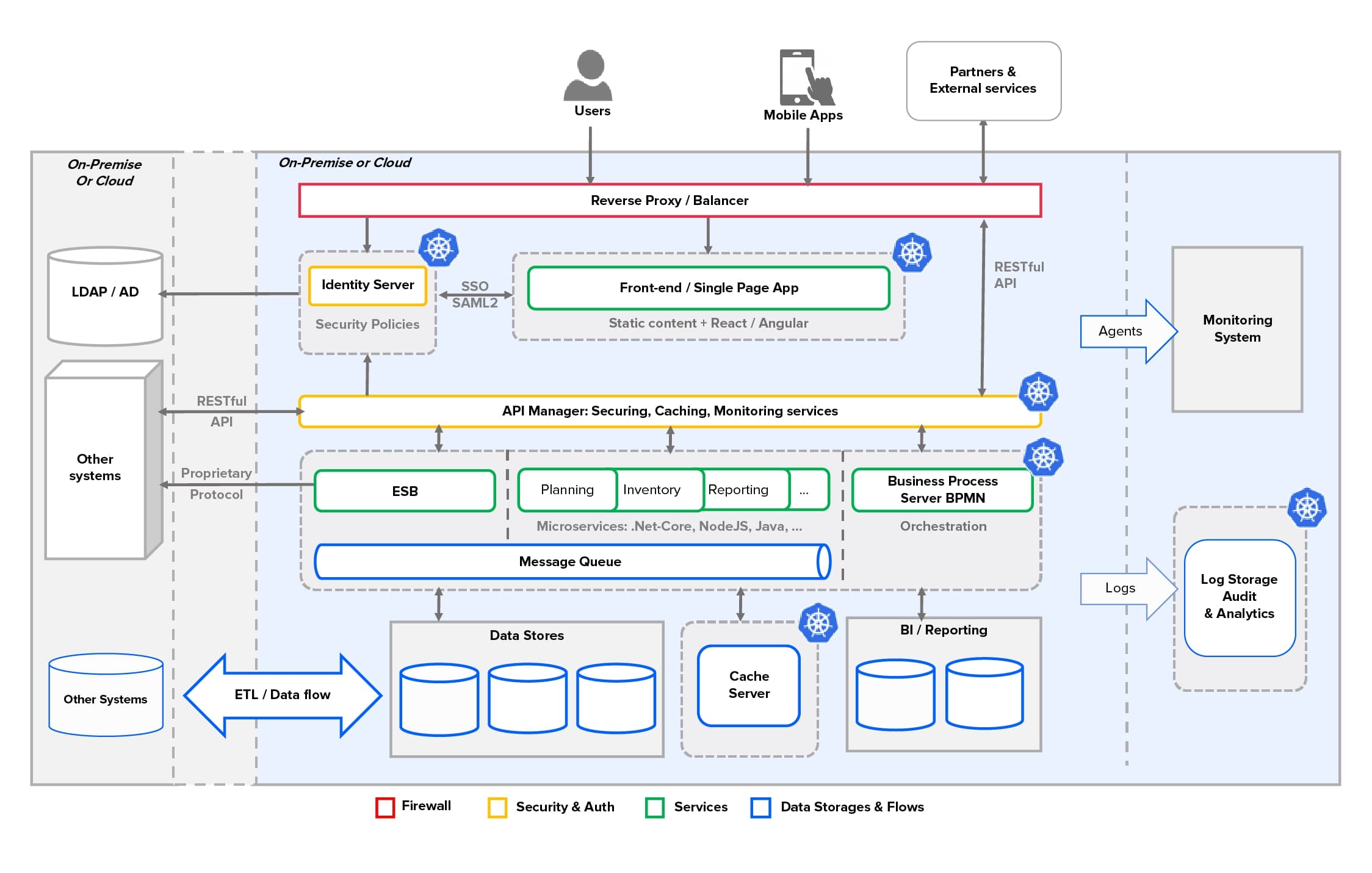

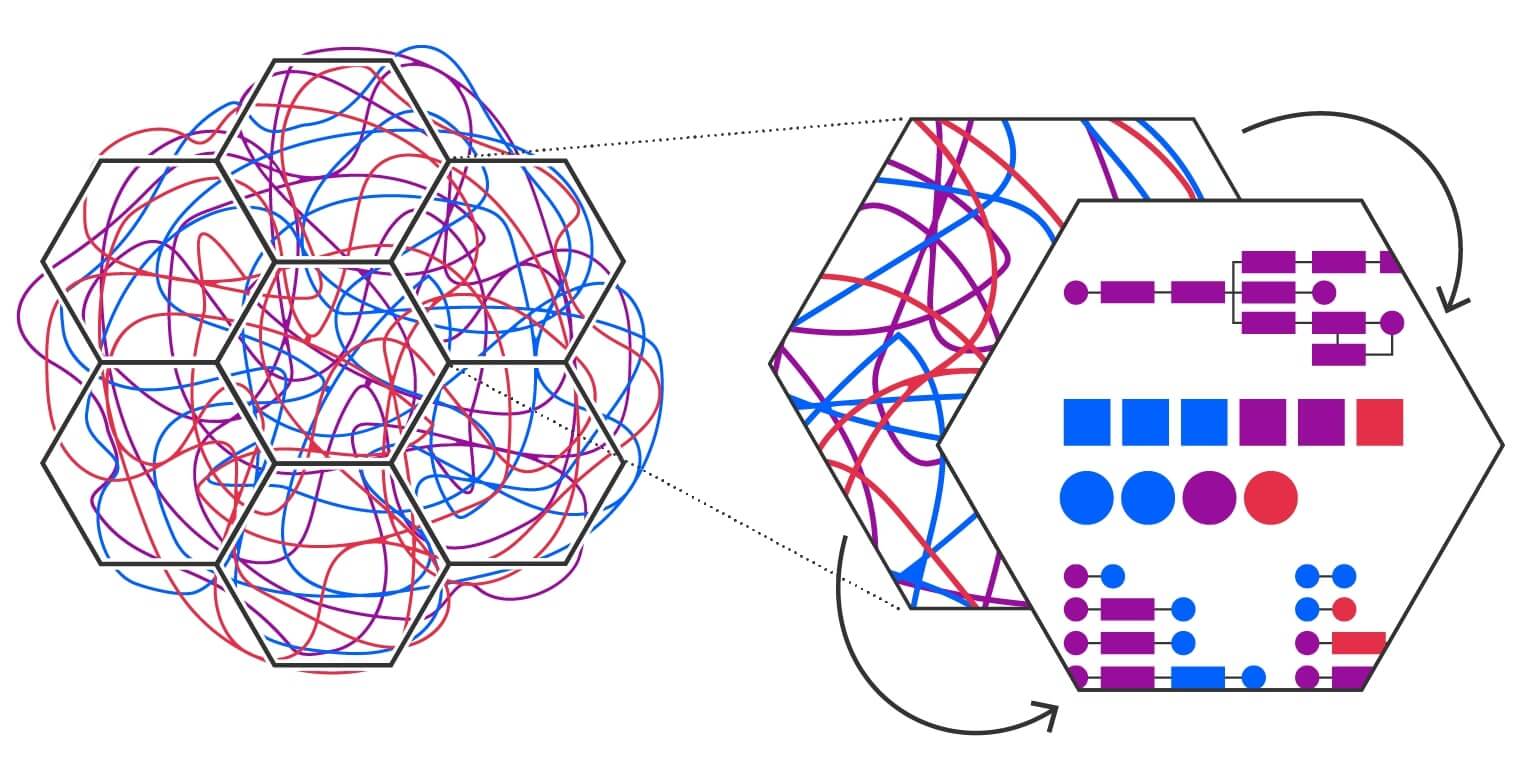

Usually, integration projects follow some major changes in business: mergers and acquisitions, expansion and diversification, etc. And after looking at different enterprises, we see almost the same setup of architecture components that are required for each case.

Architecture components

The schema below offers a general architecture that can be used to integrate and automate any enterprise; it can be applied to any cloud or deployed on-premises. It's also possible to use a hybrid setup in which the on-premises deployment is extended by a cloud provider.

Main components and their functions in the architecture explained

| Component | Function |
| --- | --- |
| Reverse Proxy/Balancer | Acts as a primary firewall that restricts access from the Internet and intranet to the sub-network where the middleware is located. Can also provide load balancing, including for legacy components that are not hosted under a container orchestrator (Kubernetes*). |
| Identity Server | Implements the SSO login process. Manages access policies. Handles several user stores. Provides role-based access functionality. |
| API Manager | Authenticates and authorises API requests from any client or device. A single point of audit logging. API and application lifecycle management. API documentation and mocking. Standardises communication protocols, for example, to HTTPS/JSON. |
| Services/Microservices | Can be hosted in isolated containers and managed by a container manager (like Kubernetes). This system can be easily scaled horizontally and, for microservices, even service by service. Components such as BPS, ESB and Front-End can also be treated as services. |
| Message Queue | Transport for asynchronous and guaranteed message delivery. Helps to protect your enterprise systems and partners’ services from being overloaded. |
| ESB | In the modern world, an ESB could be considered a deprecated technology, but it still provides a large set of connectors for different systems that allow new services to be exposed with configuration only. On the other hand, the new open-source cloud-native Ballerina language enables you to create integration services with minimal code, and it is being extended with new connectors and features all the time. |
| Business Process Server | Executes long-running stateful processes that enable user interaction. When all required business functions are covered with services, cross-system business process automation becomes an easy task. |
| ETL/Data Flow | Some business functions require a large amount of data transformation, synchronisation, delivery and historicisation. In this case, it's possible to use modern visual tools that allow data flows to be built using only configuration, and to expose them as a service or run them on a schedule. |
| Container Orchestration | System for automating deployment, scaling and management of containerised applications. The components that are usually managed by container orchestrator are marked with |
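To make the API Manager row more concrete, here is a minimal sketch of the three duties listed for it: authentication, authorisation and a single point of audit logging. The client registry, API keys and scope names are hypothetical and exist only for illustration; a real API manager such as those discussed later would provide this out of the box.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Optional, Tuple

# Hypothetical client registry: API key -> client name and granted scopes.
CLIENTS: Dict[str, Dict[str, Any]] = {
    "key-mobile": {"name": "mobile-app", "scopes": {"accounts:read"}},
    "key-partner": {"name": "partner-x", "scopes": set()},
}


@dataclass
class ApiGateway:
    audit_log: List[Dict[str, Any]] = field(default_factory=list)

    def handle(self, api_key: str, scope: str,
               backend: Callable[[], Any]) -> Tuple[int, Optional[Any]]:
        client = CLIENTS.get(api_key)
        if client is None:
            status, body = 401, None       # authentication failed
        elif scope not in client["scopes"]:
            status, body = 403, None       # authenticated but not authorised
        else:
            status, body = 200, backend()  # forward the call to the service
        # Every request is recorded, whether it succeeded or not.
        self.audit_log.append({
            "client": client["name"] if client else "anonymous",
            "scope": scope,
            "status": status,
        })
        return status, body


gateway = ApiGateway()
for key in ("key-mobile", "key-partner", "bad-key"):
    print(key, gateway.handle(key, "accounts:read", lambda: {"balance": 1250.5}))
```

Because every request passes through `handle`, the audit log is complete by construction, and the back-end service is never called for rejected requests.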

How to implement components

Some components could be omitted or reused from the current enterprise architecture; however, we try to compose the best (from our point of view) open-source component implementation scheme to minimise initial project investments and ownership costs.

Since we aim to integrate with the cloud, we use Docker for containerisation and Kubernetes as the container orchestrator, as these tools are market leaders.

| Group | Component | Tool/Solution | Details |
| --- | --- | --- | --- |
| (Micro)Services Architecture | Frameworks | Moleculer/NodeJS | Progressive microservices framework for Node.js. |
| | | Ballerina.io | Cloud-native programming language to write microservices that integrate APIs. |
| | | .NET Core | .NET Core is an open-source, general-purpose development platform that is cross-platform (supporting Windows, macOS and Linux). |
| | | Spring Framework | The most well-known Java framework that includes different enterprise application integration sub-projects such as Spring Cloud and Spring Data Flow. |
| | | Ratpack.io | Ratpack is a set of Java libraries for building scalable HTTP applications. It has a tiny codebase and is useful for Groovy developers. |
| | API Managers | WSO2 API Manager | An open-source approach that addresses full API lifecycle management, monetisation and policy enforcement. A central component used to deploy and manage API-driven ecosystems. |
| | | Kong | Next-generation API platform for multi-cloud and hybrid organisations. |
| | Legacy as Web / REST Service, Service-to-Service Integration | WSO2 Enterprise Integrator | WSO2 Enterprise Integrator is an open-source product for cloud-native and container-native projects, enabling enterprise application integration experts to build, scale and secure sophisticated integration solutions to achieve digital agility. Unlike other integration products, WSO2 Enterprise Integrator already contains integration runtimes, message brokering, business process modelling, analytics and visual tooling capabilities. |
| | SSO, Access Policies | WSO2 Identity Server | WSO2 Identity Server is an open-source IAM product optimised for identity federation and SSO with comprehensive support for adaptive and strong authentication. It helps identity administrators to federate identities, provide secure access to Web/mobile applications and endpoints, and bridge versatile identity protocols across on-premises and cloud environments. |
| | | RedHat Keycloak | Keycloak is an open-source Identity and Access Management (IAM) solution aimed at modern applications and services. It makes it easy to secure applications and services with little to no code. |
| Data Integration | ETL / Data-Flow | Apache NiFi | Apache NiFi supports powerful and scalable directed graphs of data routing, transformation and system mediation logic. |
| | | Pentaho | Quickly and easily delivers the data to your business and IT users, with no coding or complexity required. |
| | Message Queue | RabbitMQ | RabbitMQ is lightweight and easy to deploy on-premises and in the cloud. It supports multiple messaging protocols and can be deployed in distributed and federated configurations to meet high-scale, high-availability requirements. |
| | | Apache ActiveMQ | Apache ActiveMQ is the most popular and powerful open-source messaging server. |
| Business Process Automation | BPMN | Camunda BPM | An open-source platform for workflow and decision automation that brings business users and software developers together. |
| | | WSO2 BPS/Enterprise Integrator | Scalable and lean Business Process Server (BPS) that helps to increase productivity and enhance competitiveness by enabling developers to easily deploy business processes and models written using BPEL and BPMN. |
| | | Alfresco BPM / Activiti BPM | Alfresco Process Services (powered by Activiti) is an enterprise Business Process Management (BPM) solution targeted at business people and developers. Includes native iOS and Android apps that help you operate your business processes. Not fully open-source, but almost all open-source BPM systems are built on top of Activiti BPM. |

Cloud provisioning and deployment

While cloud services become more and more affordable, customers often consider changing their cloud provider because of a better price or higher performance. Often, they opt for a multi-cloud infrastructure to be able to choose the best service from different providers. This means our enterprise application integration approach needs to be cloud-agnostic and provide a universal solution that could fit any cloud.

Infrastructure as code is the best approach to achieve this goal, and our choice is Terraform as it allows you to safely and predictably create, change and improve infrastructure based on the declarative configuration files that can be shared among team members, treated as code, edited, reviewed and versioned. So, it’s a versioned infrastructure!

What’s also great about our method is that we use self-configured Docker containers for the testing and development phases of a project. Those containers have all layers except the last one, which holds the development artefacts. That last layer is deployed by the container itself during start-up. This saves the time otherwise spent rebuilding images and allows us to store the images publicly on Docker Hub, since they do not contain any private information or artefacts.
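The start-up step described above could look roughly like the following entrypoint sketch. It is an assumption about the mechanism, not the code from our repository: it supposes the artefacts are made available in a directory (for example, a mounted volume), and the environment variable names `ARTIFACT_SOURCE` and `DEPLOY_DIR` are hypothetical.

```python
import os
import shutil
from pathlib import Path
from typing import List


def deploy_artifacts(source: Path, target: Path) -> List[str]:
    """Copy every artefact file from `source` into `target`; return the names deployed."""
    target.mkdir(parents=True, exist_ok=True)
    deployed = []
    for item in sorted(source.iterdir()):
        if item.is_file():
            shutil.copy2(item, target / item.name)
            deployed.append(item.name)
    return deployed


if __name__ == "__main__":
    # Hypothetical variable names and default paths, used only for illustration.
    source = Path(os.environ.get("ARTIFACT_SOURCE", "/artifacts"))
    target = Path(os.environ.get("DEPLOY_DIR", "/opt/app/deploy"))
    if source.is_dir():
        print("deployed:", deploy_artifacts(source, target))
    # The container's actual server process would be started after this point.
```

The public image contains only this generic logic; the private artefacts arrive at run time, so the image itself never needs to be rebuilt when they change.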

Follow the link below to our GitHub repository, where you can find a demo Terraform project that creates a Kubernetes cluster in the AWS cloud with some components running on it. It will take you about ten minutes to create your cluster in the cloud! https://github.com/eleks/integration-platform

Enterprise application integration FAQs

1. What’s different about this approach and what are the benefits for my organisation?

There is no need to break up current systems and business processes to analyse and extend the existing infrastructure with the required components. We can build the desired service architecture, integrate data and automate cross-systems business processes with minimal investments for kick-off, development and ownership.

We understand that enterprises are very careful when it comes to changing current infrastructure, because this process is often associated with substantial spending and can even cause losses. The best strategy here is to work iteratively, focusing on the problems that require the least effort and can potentially bring the most value.

In the beginning, the integration platform could act as an extension of the current architecture: a façade that hides the real protocols and systems inside the enterprise. As we add new systems as services to this façade and subscribe clients to them, we can collect load and performance statistics, then estimate the cost and plan changes to services or infrastructure.

2. How much will it cost me?

We advise you to use open-source systems with the Apache 2.0 licence or one close to it. This allows you to start a project with minimal costs: you pay only for hosting the required components in the cloud. We suggest you choose tools with official support available, as this ensures that the support of your architecture can easily be transferred to another provider for development or maintenance if needed.

3. How can I estimate the scope of work before I start implementing this architecture?

The total amount of work is not easy to predict. Moreover, by the time the requirements have been collected and the current infrastructure analysed, some of that information may already be outdated. However, our approach is not only about the technology stack but also about the process and culture of future development for this kind of architecture, meaning that you can drive further projects using just your available resources. The best practice is to apply the agile methodology to all such projects.

To improve the accuracy of your estimate, try to split the entire project into small sub-projects. Estimate and execute your integration step-by-step. Below you can find an example of how the work can be estimated for a hypothetical project. This estimate is an average based on our experience with these types of projects. But, of course, the estimate largely depends on the scale of your current infrastructure and the availability of resources and expertise within your team.

- The initial analysis of the current architecture and the design of a new one can take around 15 days. At this stage, we analyse the landscape, the components of the high-level architecture, the reasons for the architecture changes, such as problems, limits and priorities. Also, several intermediate change steps are included here.

- Declaring the key rules for enterprise application integration architecture development: 5-10 days. Protocols, integration approaches for different cases, security, standards, etc.

- Provisioning of the platform with standardised components. With cloud available, it could take several days, and with the approved stabilised infrastructure, it’s a matter of hours.

- Connecting each new system: 2-15 days. It can take 15 days when standard connectors are unavailable for a particular system.

- Extending existing functionality with a standard protocol and security (proxy service): 1-3 days. Once the platform is stabilised, adding the API design and minimal enterprise application integration logic takes one day or less.

- Exposing a complex service that hides several small functions: 3-5 days. This usually involves working with several data sources.

- Implementing a service for a non-existent function: 3-5 days. There is no back-end system with this function, but we have all the resources for implementation.

- Long-running business process: 10 days. 30 steps (10 user tasks + 20 automated).

- CI/CD procedures for each type of service: 3-5 days.
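As a worked example, the ranges above can be added up for a hypothetical project. The per-unit figures come from the list; the scope (four systems, ten proxy services and so on) is invented purely for illustration, and platform provisioning is left out because the list gives no fixed number of days for it.

```python
from typing import Dict, Tuple

# (min_days, max_days) per unit of work, taken from the list above.
DAYS_PER_UNIT: Dict[str, Tuple[int, int]] = {
    "analysis_and_design": (15, 15),
    "integration_rules": (5, 10),
    "connect_system": (2, 15),
    "proxy_service": (1, 3),
    "complex_service": (3, 5),
    "new_function_service": (3, 5),
    "business_process": (10, 10),
    "cicd_per_service_type": (3, 5),
}

# Hypothetical project scope: how many units of each kind are needed.
SCOPE: Dict[str, int] = {
    "analysis_and_design": 1,
    "integration_rules": 1,
    "connect_system": 4,
    "proxy_service": 10,
    "complex_service": 3,
    "new_function_service": 2,
    "business_process": 1,
    "cicd_per_service_type": 3,
}


def estimate(scope: Dict[str, int]) -> Tuple[int, int]:
    """Return the (optimistic, pessimistic) total in working days."""
    low = sum(DAYS_PER_UNIT[item][0] * count for item, count in scope.items())
    high = sum(DAYS_PER_UNIT[item][1] * count for item, count in scope.items())
    return low, high


print(estimate(SCOPE))  # (72, 165)
```

The wide gap between the two totals comes mostly from connecting systems (8 to 60 days here), which is why the availability of standard connectors matters so much for the overall estimate.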

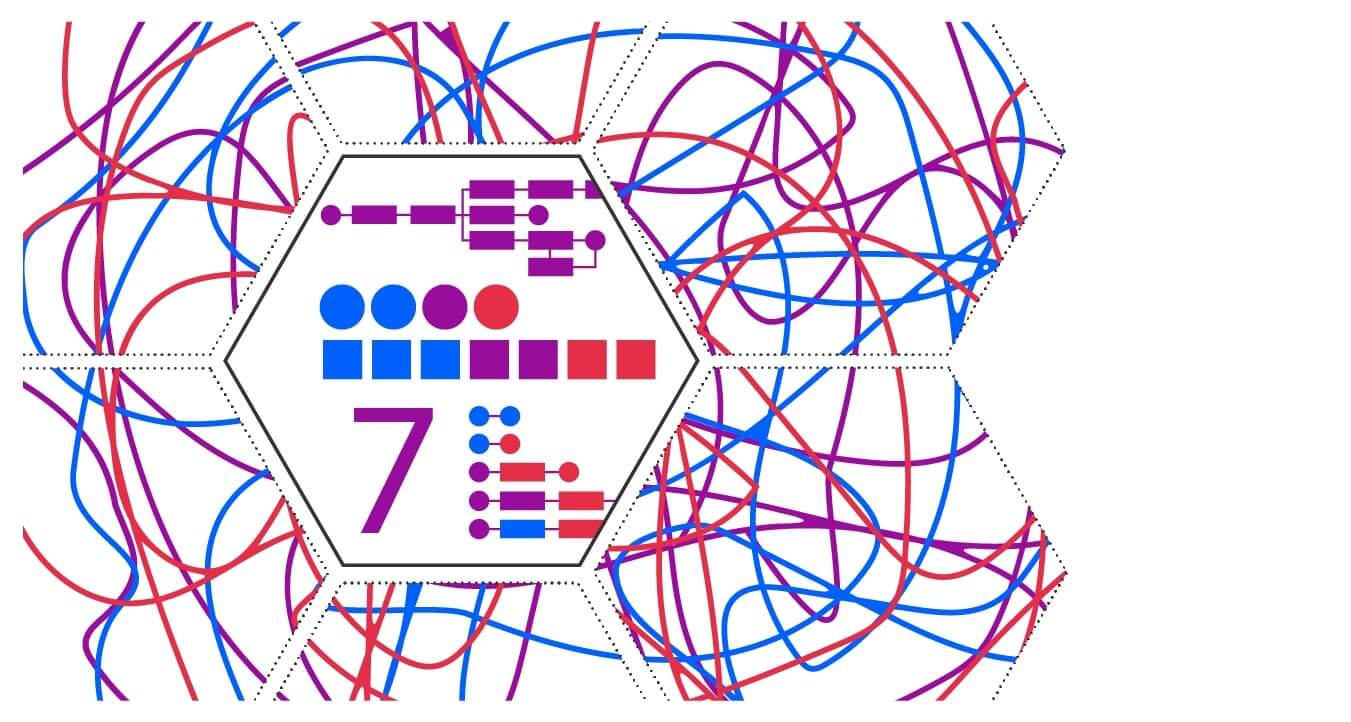

4. How many services do I need to implement?

So, how ’micro’ should the microservice be? What should the in/out parameters be? Should we implement ‘create’ + ‘update’ methods or just one ‘register’? 80% of the success of service development depends on correct API design. That success can be measured by how long the API stays unchanged.

All services must be easy-to-use from the client’s perspective. Also, the number of services is usually associated with the number of business entities. For example, if we are talking about a bank, then we will have the following services: cards, accounts, loans, deposits, currencies, etc.

This means we can estimate the number of services for a specific business area fairly precisely, even if a few more need to be added during requirements collection.

5. Which services should I avoid implementing and why?

The worst case refers to databases or logical transactions: don’t put all your data into one big transaction.

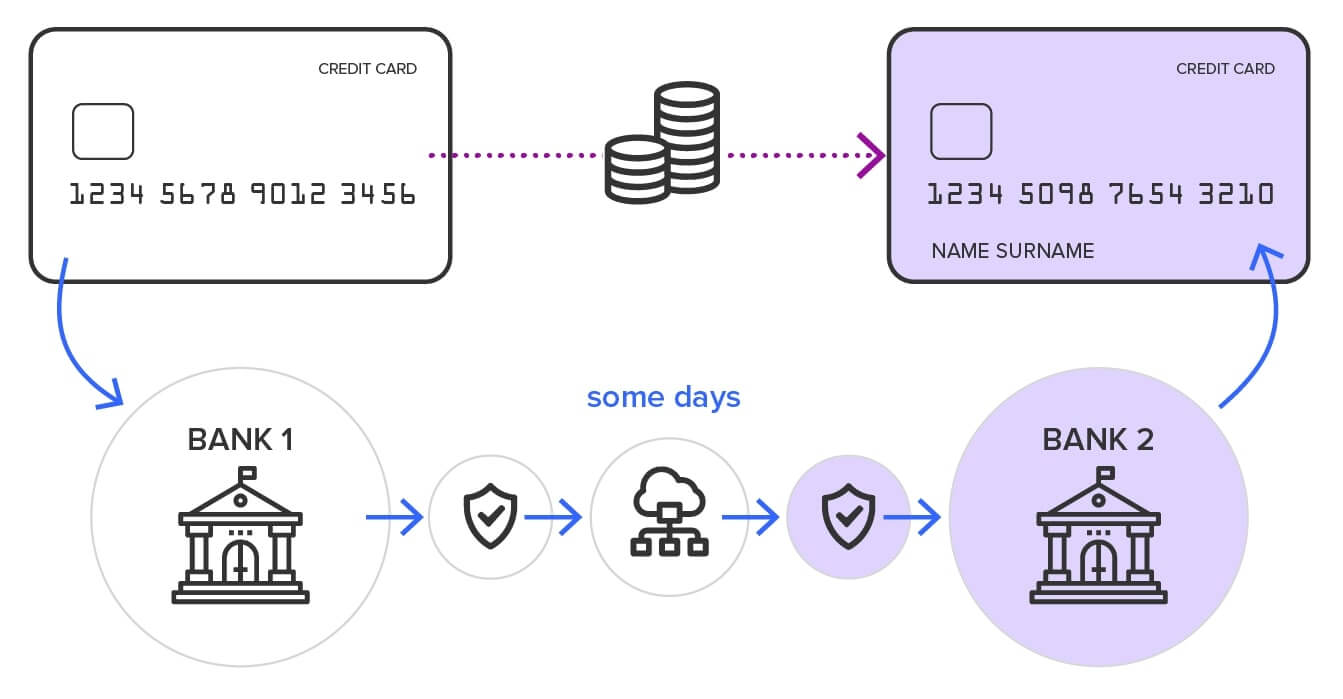

For example, today, the instant card-to-card money transfer between banks is a common operation. This operation consists of three steps: negative amount blocking on source card (the credit), positive amount blocking on destination card, and finally the transfer. The instant transaction happens because the first and second step are both quick; the last step can be postponed and could run for several days. If the last postponed step fails, then the money returns through a long-running process. In this example, we can sacrifice the transactionality to achieve the desired speed.
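The three steps can be sketched as follows. This is a simplified model under stated assumptions, not a description of how any real card scheme works: amounts are integers in minor units, each card tracks a settled `balance` plus a `pending` sum of blocked amounts, and the names `Card`, `start_transfer` and `settle_transfer` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Card:
    balance: int      # settled balance, in minor units
    pending: int = 0  # sum of blocked amounts (negative or positive)

    @property
    def available(self) -> int:
        return self.balance + self.pending


def start_transfer(src: Card, dst: Card, amount: int) -> None:
    """Steps 1 and 2: two quick blocks, so the transfer looks instant."""
    if amount <= 0 or src.available < amount:
        raise ValueError("invalid amount or insufficient funds")
    src.pending -= amount  # negative block on the source card
    dst.pending += amount  # positive block on the destination card


def settle_transfer(src: Card, dst: Card, amount: int, clearing_ok: bool) -> None:
    """Step 3: postponed settlement, possibly days later."""
    # Both blocks are released in either case.
    src.pending += amount
    dst.pending -= amount
    if clearing_ok:
        src.balance -= amount
        dst.balance += amount
    # If clearing failed, the balances stay untouched: the money has returned.


source, destination = Card(balance=10_000), Card(balance=0)
start_transfer(source, destination, 2_500)
print(source.available, destination.available)  # 7500 2500
settle_transfer(source, destination, 2_500, clearing_ok=True)
print(source.balance, destination.balance)      # 7500 2500
```

No single transaction spans both banks: each step is a small local update, and a failed settlement is handled by a compensating release rather than a rollback.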

In most cases, the enterprise’s back-end system becomes a bottleneck for services; however, there’s no need to suggest an increase of resources because it's possible to handle the problem with middleware by using caching, queues or business process automation.
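Caching is the simplest of these middleware techniques to show in code. The sketch below is a minimal time-to-live cache placed in front of a slow back-end call; the class name `TTLCache`, the `slow_backend` function and the 30-second lifetime are assumptions made for illustration.

```python
import time
from typing import Any, Callable, Dict, Tuple


class TTLCache:
    """Serve repeated reads from memory so the back end is hit once per TTL window."""

    def __init__(self, loader: Callable[[str], Any], ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._loader = loader
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries: Dict[str, Tuple[float, Any]] = {}

    def get(self, key: str) -> Any:
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and now - entry[0] < self._ttl:
            return entry[1]            # fresh enough: no back-end call
        value = self._loader(key)      # missing or expired: call the back end
        self._entries[key] = (now, value)
        return value


backend_calls = []


def slow_backend(key: str) -> str:
    """Hypothetical stand-in for an expensive back-end request."""
    backend_calls.append(key)
    return f"rates for {key}"


cache = TTLCache(slow_backend, ttl_seconds=30)
for _ in range(100):
    cache.get("EUR")
print(len(backend_calls))  # 1
```

Whether cached data is acceptable depends on the business function: reference data such as currency lists tolerates it well, while account balances usually need a much shorter lifetime or no caching at all.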

6. If my organisation is already undergoing changes, where should I start with the infrastructure change?

This case is quite common. Without changes in the business itself, nobody is going to change the infrastructure architecture. So, we have lots of ‘unknowns’: the scope of work, systems and communication methods, architecture in the changing state, etc. In these cases, it pays to remember what Archimedes said: “Give me the place to stand, and I shall move the earth.”

So, we need a pivotal point here, since we can't count on systems staying as they are. Even the most stable systems can become instantly outdated. We have had enterprise application integration projects where some systems were replaced twice during the active development phase.

Well, there is a workaround: the most stable part of the whole enterprise architecture is usually the declaration of the APIs and the policies for their development. For example, even if the core-banking system changes the parameters for creating a deposit, the services should stay the same, in line with the business needs. So, we start with the policies:

- The main communication protocol for all enterprise services

- The API documentation format. Swagger/OpenAPI (RAML, WSDL, etc.)

- The main service development languages and technologies. Customers often have their own development/support teams that influence this decision.

- Security standards and requirements. Secure data transfer, authentication and authorisation protocols. A system that provides Auth* information.

- The rules for publishing and using services: naming conventions, versioning, calling, retirement, etc.

When we have a base to build on, we can start with service implementation by getting a high-level picture, starting small and taking incremental steps.
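Policies of this kind are easiest to keep when they can be checked automatically. As an illustration only, the sketch below validates service paths against one possible naming and versioning rule: a lowercase business entity, a mandatory major version, then lowercase resources or `{parameters}`. The rule itself is a hypothetical example, not a convention prescribed by this article.

```python
import re

# Hypothetical convention: /<entity>/v<major>/<resource or {parameter}>...
SERVICE_PATH = re.compile(
    r"/[a-z][a-z0-9-]*"                                 # business entity, e.g. /accounts
    r"/v[1-9][0-9]*"                                    # mandatory major version, e.g. /v1
    r"(/(\{[A-Za-z][A-Za-z0-9]*\}|[a-z][a-z0-9-]*))*"   # resources or {parameters}
)


def follows_convention(path: str) -> bool:
    """Return True if the whole path matches the naming and versioning rule."""
    return SERVICE_PATH.fullmatch(path) is not None


for candidate in ("/accounts/v1/balance", "/cards/v2/{cardId}/limits",
                  "/Accounts/v1", "/accounts/balance"):
    print(candidate, follows_convention(candidate))
```

A check like this could run in the CI/CD pipeline when a service is published, so that the agreed rules are enforced rather than merely documented.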

7. What’s the perfect outcome for the enterprise application integration?

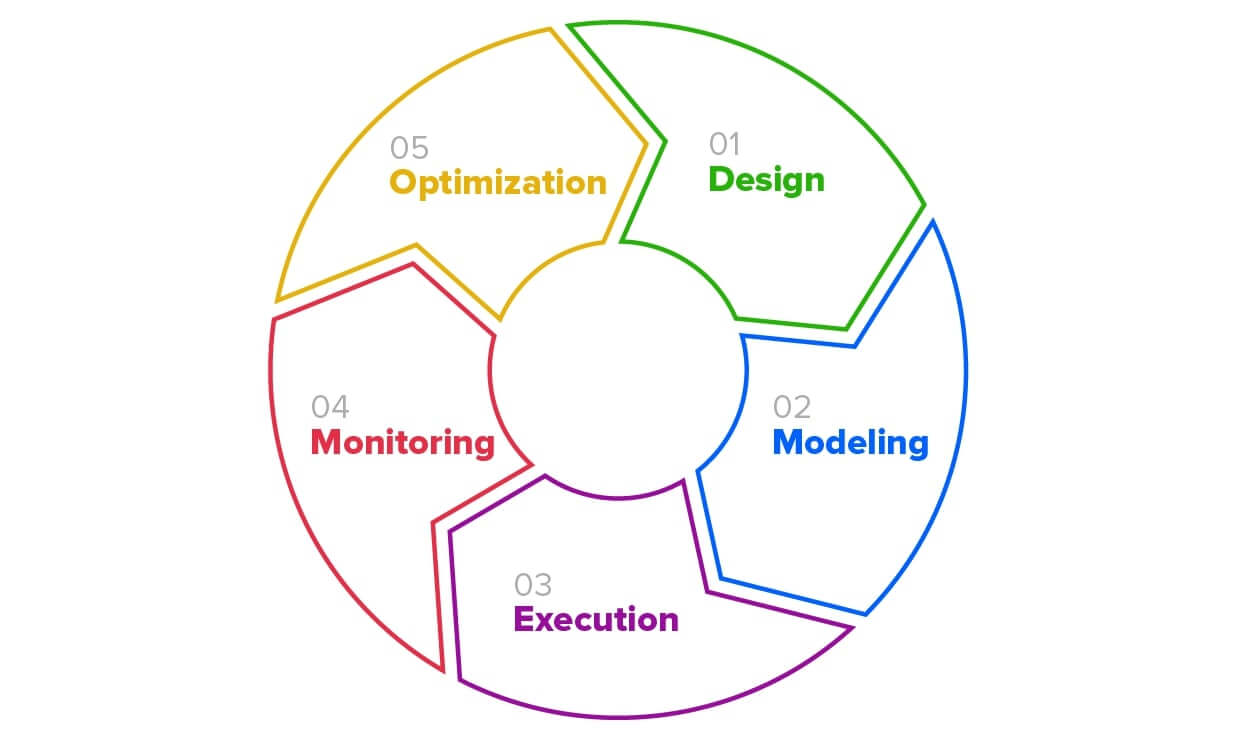

The API Manager acts as a central point for documenting, designing, prototyping, engaging, publishing and securing all enterprise APIs. To design and prototype an API, you don't need a developer anymore: a business analyst can do this job using just a browser. As soon as all the business functions are exposed as APIs, cross-system business process automation is just a matter of configuration. This can also be done by an analyst with minimal development engagement. All APIs and processes are documented, automated and can be easily changed.

With the help of monitoring, we can detect any problematic points in the whole platform and plan the corresponding changes.

Summary

Why do we need all this hassle with enterprise application integration? After all, an API Manager is available with all cloud providers as a part of the service: Apigee API Platform from Google, Azure API Management from Microsoft, API Gateway from Amazon, etc.

Unfortunately, those kinds of services do not have a common base in terms of development and configuration. And if you need to make the solution cloud-agnostic and completely independent from a cloud provider, or build your private cloud or multi-cloud, then the architecture described above is a game changer for you.

This enterprise application integration platform approach has been developed by our team of architects working on different integration projects, and it has been applied to several different enterprises. We constantly monitor new integration systems as they are released and update the components accordingly.

If you have any questions about how to automate and integrate your business processes with maximum efficiency and optimal cost, we will be happy to answer them. Get in touch with us.

P.S. While we were working on these materials, Google announced that Apigee is now: Cross-Cloud API Management Platform

By Dmytro Lukyanov, Infrastructure Architect at ELEKS.
