Neural networks now

Neural networks have recently made significant leaps in image processing and natural language processing (NLP). They’ve not only learned to recognise, localise and segment objects in images; they can now translate natural language effectively and answer complex questions. One precursor to this progress was the introduction of Seq2Seq and neural attention models, which let neural networks be more selective about the data they’re working with at any given time.

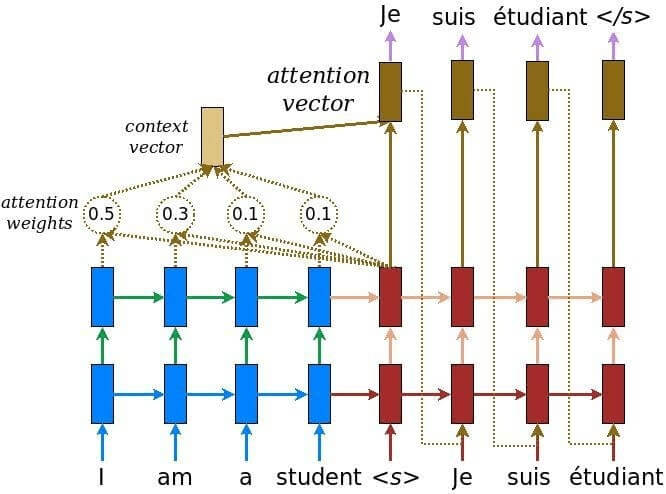

The core focus of the neural attention mechanism is to learn where to find important information. Here’s an example from neural machine translation:

Source: Google seq2seq

The cycle runs as follows:

- The words from the input sentence are fed into the encoder, which condenses the sentence’s meaning into the so-called ‘thought vector’.

- Based on this vector, the decoder produces words one by one to create the output sentence.

- Throughout this process, the attention mechanism helps the decoder focus on different fragments of the input sentence.
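As a rough sketch of the third step, a single attention step could look like the code below. It scores each input position with a plain dot product for brevity, which is our assumption (Bahdanau et al. score with a small feed-forward network instead). The function names and the toy vectors are hypothetical and chosen only for illustration.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(decoder_state, encoder_states):
    """One attention step: weight each input position, then blend them."""
    # Dot-product score between the decoder state and each encoder state
    # (assumed scoring function; other scoring functions are possible).
    scores = [
        sum(d * h for d, h in zip(decoder_state, state))
        for state in encoder_states
    ]
    weights = softmax(scores)
    # Context vector: attention-weighted average of the encoder states.
    dim = len(decoder_state)
    context = [
        sum(w * state[i] for w, state in zip(weights, encoder_states))
        for i in range(dim)
    ]
    return weights, context


# Hypothetical 2-dimensional encoder states for a three-word input sentence.
encoder_states = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
decoder_state = [0.0, 2.0]  # hypothetical current decoder state

weights, context = attend(decoder_state, encoder_states)
print(weights)  # one weight per input word
print(context)  # the blended vector the decoder would consume
```

The weights say how much each input word matters for the word being produced right now. The decoder repeats this step for every output word, so the focus can shift as the sentence unfolds.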

Neural machine translation’s current success can be attributed to:

- Sepp Hochreiter and Jürgen Schmidhuber’s 1997 creation of the LSTM (long short-term memory) neural cell. This made it possible for a learned model to work with relatively long sequences.

- The realisation of sequence-to-sequence learning (Sutskever et al., 2014; Cho et al., 2014), built on recurrent cells such as the LSTM. The concept is to “eat” one sequence and “return” another.

- The creation of the ‘attention mechanism’, first introduced by Bahdanau et al., 2015.

But why is this so technologically important? In this blog post, we describe the most promising real-life use cases for neural machine translation and link to an extended tutorial on neural machine translation with an attention mechanism.

Seq2Seq algorithm’s real-world applications

The Seq2Seq algorithm can perform several core tasks, all of them grounded in ‘translation’ but each with distinct differences. Let's take a closer look at some of them.

Neural machine translation

Machine translation took a huge step forward in 2017, with the introduction of a bidirectional residual Seq2Seq (sequence-to-sequence) neural network, complete with an attention mechanism. The mechanism’s role is to determine the importance of each word in the input sentence, then to extract additional context around each word. It’s thanks to this development that modern tools are now able to produce high-quality translations of lengthy, complex sentences.

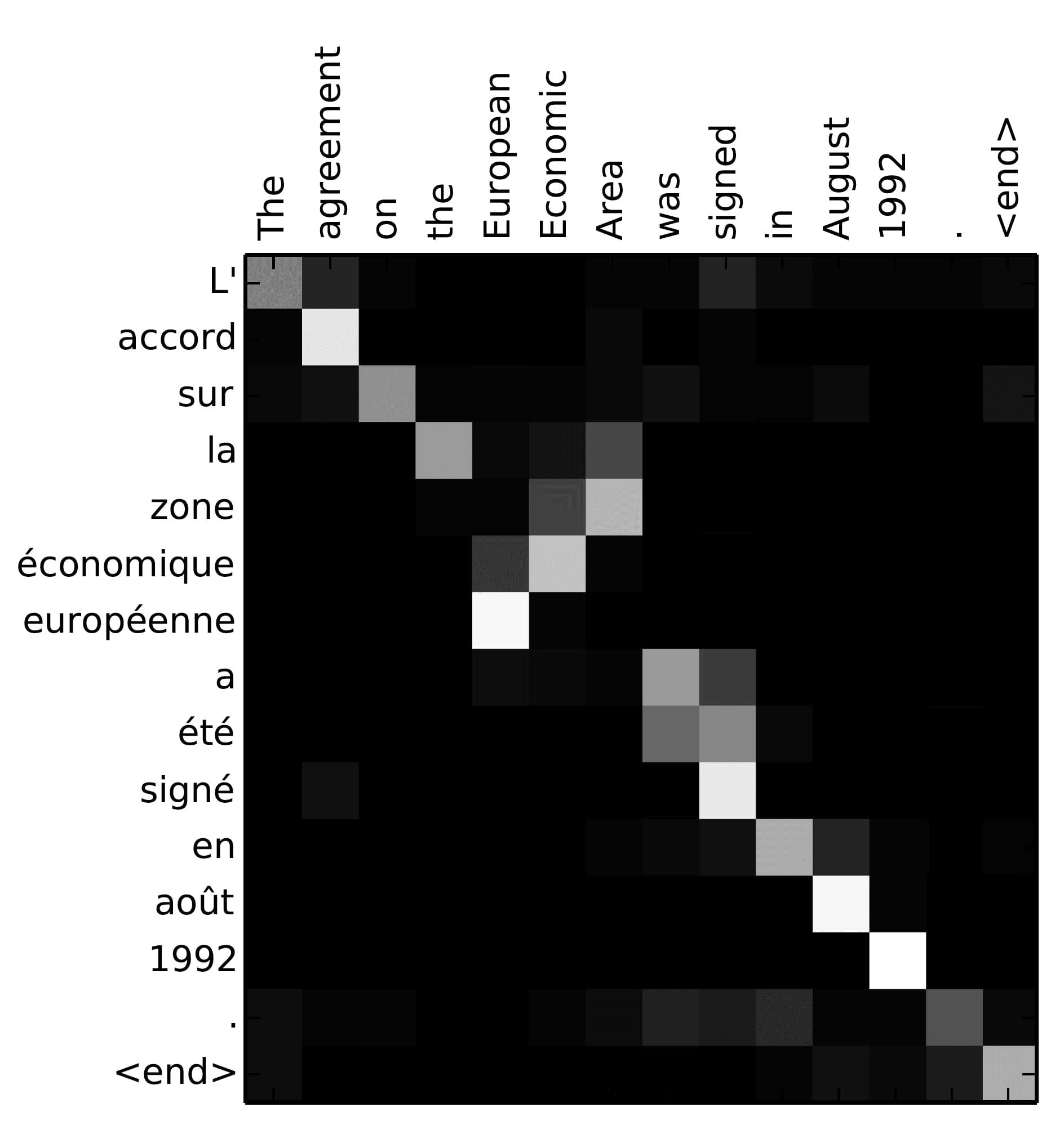

Source: TensorFlow seq2seq tutorial

We can peek under the hood of Google Translate for one of the best illustrations of neural attention in practice.

The above is a prime example of how attention is distributed when the neural network translates English into French. The decoder’s language model and the attention mechanism have learned the correct word order for the output sentence.

Source: Bahdanau et al., 2015

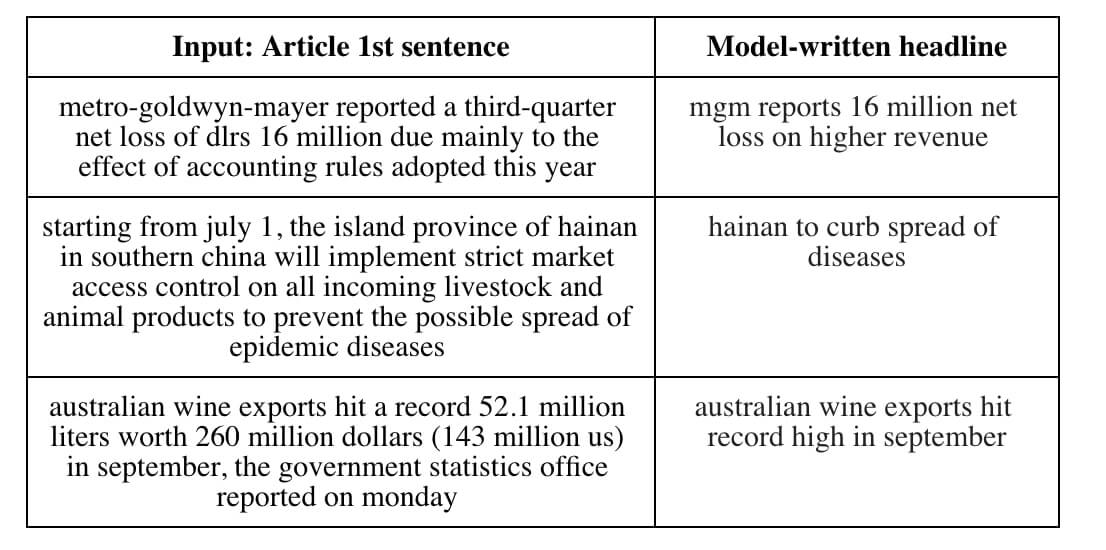
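To make the idea of an attention distribution more concrete, here is a small sketch of how such a distribution can be read as a word alignment. The weights below are invented for illustration and are not taken from any trained model. The phrase is the kind of English-to-French example discussed by Bahdanau et al., and the `align` helper is a hypothetical name.

```python
# Hypothetical attention weights, invented for illustration only.
# Each row belongs to one output (French) word and sums to 1 across
# the input (English) words.
source = ["the", "European", "Economic", "Area"]
target = ["la", "zone", "économique", "européenne"]
attention = [
    [0.85, 0.05, 0.05, 0.05],  # la
    [0.05, 0.05, 0.10, 0.80],  # zone
    [0.05, 0.10, 0.80, 0.05],  # économique
    [0.05, 0.80, 0.10, 0.05],  # européenne
]


def align(attention, source, target):
    """Pair each output word with the input word it attends to most."""
    pairs = []
    for word, row in zip(target, attention):
        best = max(range(len(row)), key=row.__getitem__)
        pairs.append((word, source[best]))
    return pairs


alignment = align(attention, source, target)
for out_word, in_word in alignment:
    print(f"{out_word} <- {in_word}")
```

With these made-up weights the adjectives and the noun swap places, which is the sort of reordering an attention plot makes visible as an off-diagonal pattern.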

Text summarisation

Annotating text and articles is a laborious process, especially if the data’s vast and heterogeneous. Attention models can be used to pinpoint the most important textual elements and compose a meaningful headline, allowing the reader to skim the text and still capture the basic meaning. What’s more, text summarisation can do this almost instantly. It can also be used to generate titles for web pages and to perform high-level information research, or information segmentation, for rapid reading.

Chatbots with question-answering capabilities

In the constant quest for efficiency, businesses are trying to automate as many routine processes as possible. As yet, however, the perfect tool for human-machine interaction hasn’t been created. Natural language processing (NLP) isn’t yet flawless but, with the addition of the attention mechanism, its accuracy is greatly improved.

An attention mechanism can detect the most significant (key) words from all kinds of questions – even those that are lengthy and complex – to produce the right answer. And the mechanism can be implemented as an add-on, to work in conjunction with the neural network on the common knowledge base. With chatbots, the mechanism transcends machine translation and takes on a higher level of abstraction – allowing it to translate one verbal sequence into another.

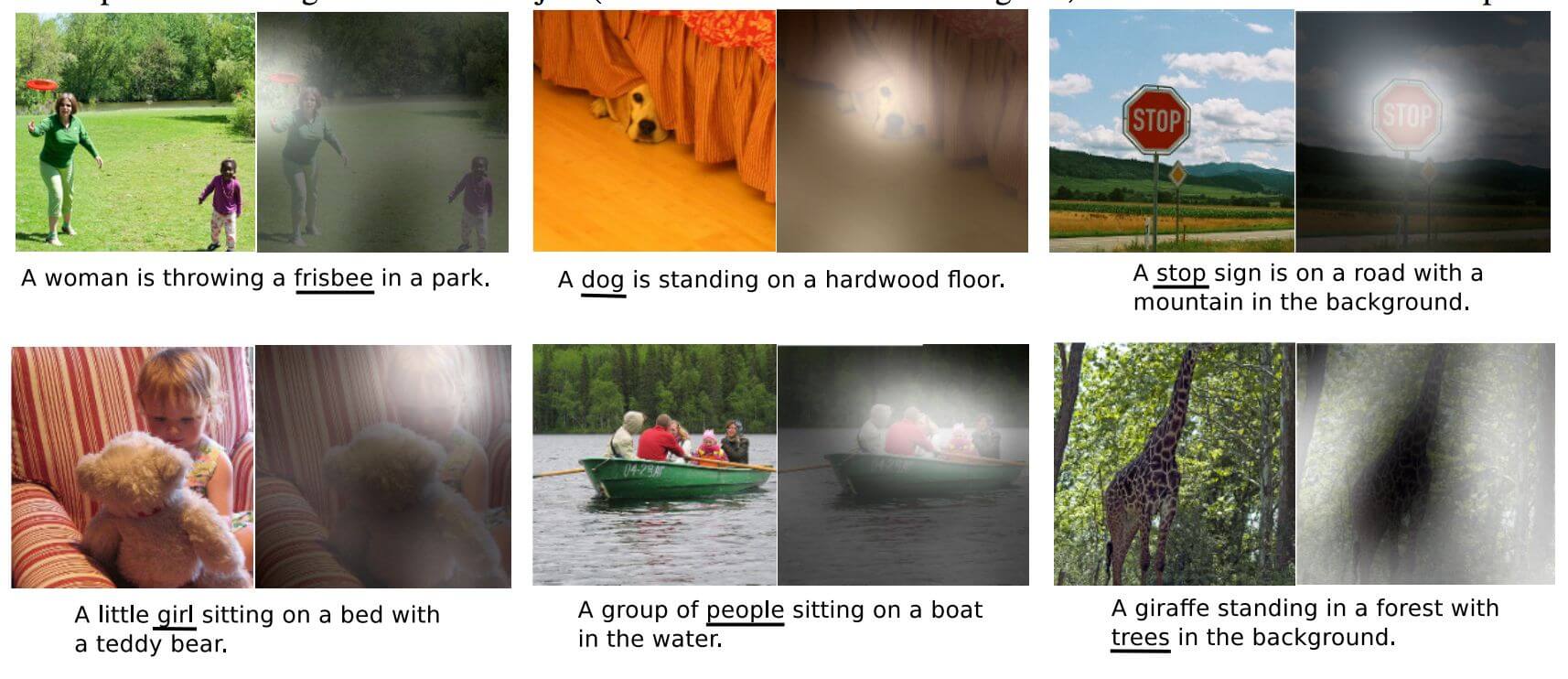

Natural language image captioning (Img2Seq)

The idea here is the same as it is for image recognition. The difference, however, is that when captioning an image, the attention heat map changes with each word of the caption being generated.

A neural network can ‘translate’ everything it sees in the image into words. The above example shows us how the network distributes its attention while formulating the description.

This image captioning functionality has significant practical potential in the real world: from automating hashtag and subtitle creation, to writing descriptions for the visually impaired, and even producing daily surveillance reports for security firms.

CAPTCHA solving

The attention mechanism turned out to be hugely successful in solving CAPTCHAs based on recognition and segmentation of noisy/distorted pictures, with subsequent text input. This functionality finds a potential application with chatbots that have to parse third-party sites to answer a query.

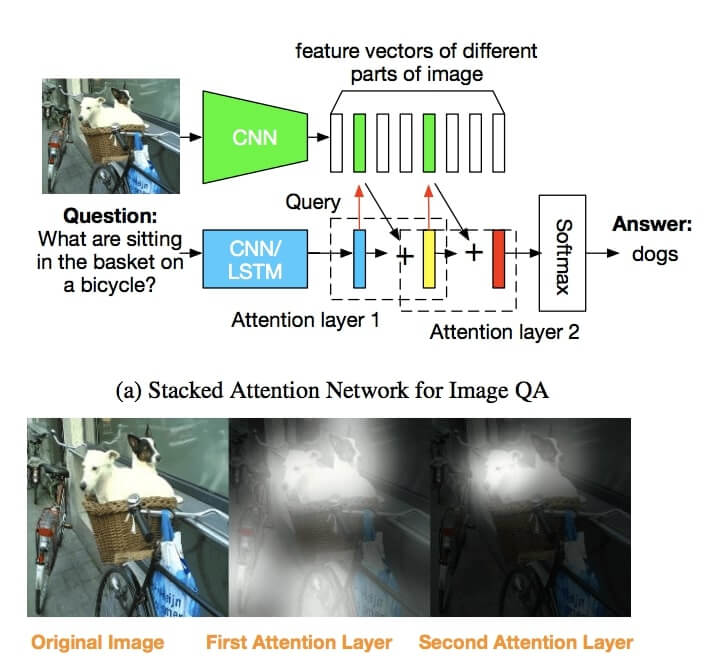

Image-based question-answering systems

Like conventional linguistic question-answering systems, image-based question-answering functionality takes a natural language input but, instead of accessing a knowledge base, it uses the attention mechanism to find the answer within the image.

TensorFlow neural machine translation Seq2Seq with attention mechanism: A step-by-step guide

There are many online tutorials covering neural machine translation, including the official TensorFlow and PyTorch tutorials. However, neither of these addresses the implementation of the attention mechanism itself (they use only an attention wrapper), even though it is a pivotal component of modern neural translation.

Here’s the link to our tutorial on neural machine translation, based on a modern Seq2Seq model with an attention mechanism built from scratch. Compared with what’s out there, this should offer an in-depth overview of all aspects of seq2seq, including the attention algorithm.

We used the TensorFlow framework to offer a usable, low-level working example of the concept, based on the Dynamic Seq2Seq in TensorFlow tutorial. And we aim to make it as good as the original PyTorch version.

We hope this will help you get the most out of your machine translation projects and, ultimately, pay dividends for your outcomes. Feel free to leave any questions and feedback in the comments box below.

By Michael Konstantinov

Deep Learning Specialist at Eleks
